Why AI governance cannot wait.

By Lindsay Hiebert · Founder · CISSP

EU AI Act enforcement is 90 days away. Colorado is 60 days away. Cyber insurance renewals are asking now. Most mid-market organizations have no answer. This page lays out the regulatory cliff in primary-source detail and the artifact path that closes it.

AI governance is no longer a 2027 problem.

Three concrete enforcement events arrive within ninety days of this page’s publication, and each one sets a pattern that other jurisdictions are following.

High-risk obligations enforce August 2, 2026

The EU AI Act (Regulation (EU) 2024/1689) entered into force on August 1, 2024 with a staggered enforcement schedule. The most consequential provisions — the obligations on high-risk AI systems under Annex III — become enforceable on August 2, 2026. Penalties exceed GDPR: up to €35 million or 7% of global annual turnover for prohibited practices, up to €15 million or 3% for non-compliance with high-risk obligations.
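The penalty ceilings above reduce to simple arithmetic. The sketch below is illustrative only: it assumes the higher of the fixed cap and the turnover percentage applies, which the paragraph above does not state (it says only "or"), and the names `PENALTY_TIERS` and `penalty_ceiling` are hypothetical. Check Article 99 of the Regulation, including its separate treatment of SMEs, before relying on any figure.

```python
from decimal import Decimal

# Ceilings quoted above: (fixed cap in EUR, share of global annual turnover).
PENALTY_TIERS = {
    "prohibited_practice": (Decimal("35000000"), Decimal("0.07")),
    "high_risk_non_compliance": (Decimal("15000000"), Decimal("0.03")),
}


def penalty_ceiling(tier: str, global_turnover_eur: Decimal) -> Decimal:
    """Return the maximum fine for a tier.

    Assumption: the higher of the fixed cap and the turnover share applies.
    """
    fixed_cap, turnover_share = PENALTY_TIERS[tier]
    return max(fixed_cap, turnover_share * global_turnover_eur)


# Example: a company with EUR 1 billion in global annual turnover.
turnover = Decimal("1000000000")
for tier in PENALTY_TIERS:
    print(tier, penalty_ceiling(tier, turnover))
```

Under that assumption, the fixed cap dominates for smaller companies, and the percentage takes over once turnover passes €500 million in either tier.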

What enforces in 90 days

- Article 9 — documented, ongoing risk management system across the AI lifecycle

- Article 10 — training data governance, including bias assessment and provenance documentation

- Article 11 — technical documentation drawn up before market placement, retained for 10 years

- Article 12 — automatic recording of events (logs) over the lifetime of the system

- Article 13 — transparency to deployers, including instructions for use

- Article 14 — human oversight measures, designed in

- Article 15 — accuracy, robustness, and cybersecurity throughout the lifecycle

- Article 50 — transparency obligations including disclosure that users are interacting with AI, and labeling of synthetic content

Annex III high-risk categories include hiring and recruitment algorithms, credit scoring, biometric identification, critical infrastructure management, educational assessment, law enforcement tools, migration and border control, and AI affecting access to essential services. These are the categories most mid-market organizations either deploy directly or consume as embedded SaaS features.

First comprehensive U.S. state AI law — effective June 30, 2026

Colorado SB 24-205, signed May 17, 2024 and now effective June 30, 2026 (delayed from February 1, 2026), is the first comprehensive U.S. state AI law. It applies to developers and deployers of ‘high-risk artificial intelligence systems’ used in consequential decisions: employment, education, financial services, healthcare, housing, insurance, legal services, and government services.
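A compliance calendar can track the two dates named so far. This is a minimal sketch: the dates come from the text above, while the `DEADLINES` mapping, the `days_remaining` helper, and the reference date in the example are hypothetical.

```python
from datetime import date

# Enforcement dates as stated in the text above.
DEADLINES = {
    "Colorado SB 24-205 effective date": date(2026, 6, 30),
    "EU AI Act high-risk obligations": date(2026, 8, 2),
}


def days_remaining(today: date) -> dict[str, int]:
    """Days from `today` to each deadline; negative means it has passed."""
    return {name: (deadline - today).days for name, deadline in DEADLINES.items()}


# Example with an arbitrary reference date (an assumption, not the page's date).
for name, days in days_remaining(date(2026, 5, 4)).items():
    print(f"{name}: {days} days")
```

Passing `date.today()` instead of a fixed date turns the same function into a running countdown.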

What it requires of deployers

- Risk management policy and program governing the high-risk AI system

- Annual impact assessment of the high-risk system

- Consumer notification when AI makes or substantially factors in a consequential decision

- Public statement summarizing AI use and risk management practices

- Disclosure to the Attorney General within 90 days of discovering algorithmic discrimination

Affirmative defense: compliance with a recognized AI risk management framework (NIST AI RMF or ISO/IEC 42001) creates a rebuttable presumption of ‘reasonable care.’ This is the structural reason mid-market organizations need an AUP and a risk assessment artifact aligned to NIST RMF — it is the safe-harbor instrument.

NIST AI Risk Management Framework

NIST AI RMF 1.0 (released January 26, 2023) is voluntary in name and de facto required in practice. Federal regulators — FTC, CFPB, FDA, SEC, EEOC — reference NIST RMF principles in their AI enforcement guidance. The framework crosswalks formally to ISO/IEC 42001. The Colorado AI Act explicitly cites NIST RMF for affirmative-defense purposes. Federal contractors increasingly face NIST-aligned AI governance as a procurement requirement.

Structure: 4 functions (GOVERN, MAP, MEASURE, MANAGE), 19 categories, 72 subcategories. The Generative AI Profile (NIST AI 600-1, July 2024) adds 12 GenAI-specific risk categories and over 200 suggested actions. NIST has indicated an AI Agent Interoperability Profile is planned for Q4 2026.

CISO-relevant subcategories the SanctumShield report cites

- GOVERN-1.1 — legal and regulatory requirements involving AI are understood and managed

- GOVERN-1.4 — the risk management process is established and accountability for risk roles is documented

- MAP-4.1 — approaches for mapping third-party technology risks (relevant to MCP servers, A2A chains, embedded SaaS AI)

- MEASURE-2.7 — AI system security is evaluated and documented

- MANAGE-1.3 — residual risks are documented and accepted
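One way to keep findings traceable to the subcategories listed above is to store the IDs alongside each finding and validate their shape. The sketch below is an assumption about how such a record could look, not SanctumShield's actual report format. The `Finding` class, the `group_by_function` helper, and the sample findings are hypothetical, and the IDs use the hyphenated form this page uses.

```python
import re
from collections import defaultdict
from dataclasses import dataclass

# Subcategory IDs in the hyphenated form used in the list above.
SUBCATEGORY_ID = re.compile(r"^(GOVERN|MAP|MEASURE|MANAGE)-\d+\.\d+$")


@dataclass(frozen=True)
class Finding:
    """One risk-report finding and the NIST AI RMF subcategories it cites."""

    title: str
    subcategories: tuple[str, ...]

    def __post_init__(self) -> None:
        for sub_id in self.subcategories:
            if not SUBCATEGORY_ID.match(sub_id):
                raise ValueError(f"not a NIST AI RMF subcategory ID: {sub_id!r}")


def group_by_function(findings: list[Finding]) -> dict[str, list[str]]:
    """Index finding titles by RMF function (GOVERN, MAP, MEASURE, MANAGE)."""
    grouped: dict[str, list[str]] = defaultdict(list)
    for finding in findings:
        for sub_id in finding.subcategories:
            function = sub_id.split("-", 1)[0]
            if finding.title not in grouped[function]:
                grouped[function].append(finding.title)
    return dict(grouped)


# Hypothetical sample findings for illustration.
findings = [
    Finding("No AI Acceptable Use Policy on file", ("GOVERN-1.1", "GOVERN-1.4")),
    Finding("Unreviewed third-party MCP server in use", ("MAP-4.1", "MEASURE-2.7")),
]
print(group_by_function(findings))
```

Rejecting malformed IDs at construction time keeps a typo from silently producing a finding that cites no recognizable subcategory.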

ISO/IEC 42001 is the first international AI management system standard (published December 2023). It plays the same role for AI that ISO/IEC 27001 plays for information security, and it increasingly appears in Fortune 500 procurement questionnaires.

HIPAA §164.502(e): BAAs must explicitly cover AI services that process PHI. Embedded AI features (M365 Copilot, Workspace Gemini, Agentforce) need their own BAA addendum. §164.404: 60-day breach notification. An employee pasting PHI into a non-BAA AI tool can start the clock.
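The notification windows this page cites are date arithmetic from the day of discovery. The sketch below treats each window as an outer bound in calendar days, using the 60-day and 90-day figures stated on this page. The names `NOTIFICATION_WINDOWS` and `notification_deadline` are hypothetical, and counsel should confirm how each rule counts days and what "discovery" means in a given incident.

```python
from datetime import date, timedelta

# Windows as stated on this page, in calendar days from discovery.
NOTIFICATION_WINDOWS = {
    "HIPAA 164.404 breach notification": timedelta(days=60),
    "Colorado AG disclosure": timedelta(days=90),
}


def notification_deadline(rule: str, discovered_on: date) -> date:
    """Latest notification date, treating the window as an outer bound."""
    return discovered_on + NOTIFICATION_WINDOWS[rule]


# Example with a hypothetical discovery date.
discovered = date(2026, 3, 1)
for rule in NOTIFICATION_WINDOWS:
    print(rule, notification_deadline(rule, discovered).isoformat())
```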

SOC 2 CC5.3: auditors will note exceptions where there is no AI Acceptable Use Policy. CC6.1 / CC7.2: AI tool access on personal devices and shadow AI traffic both fall in scope.

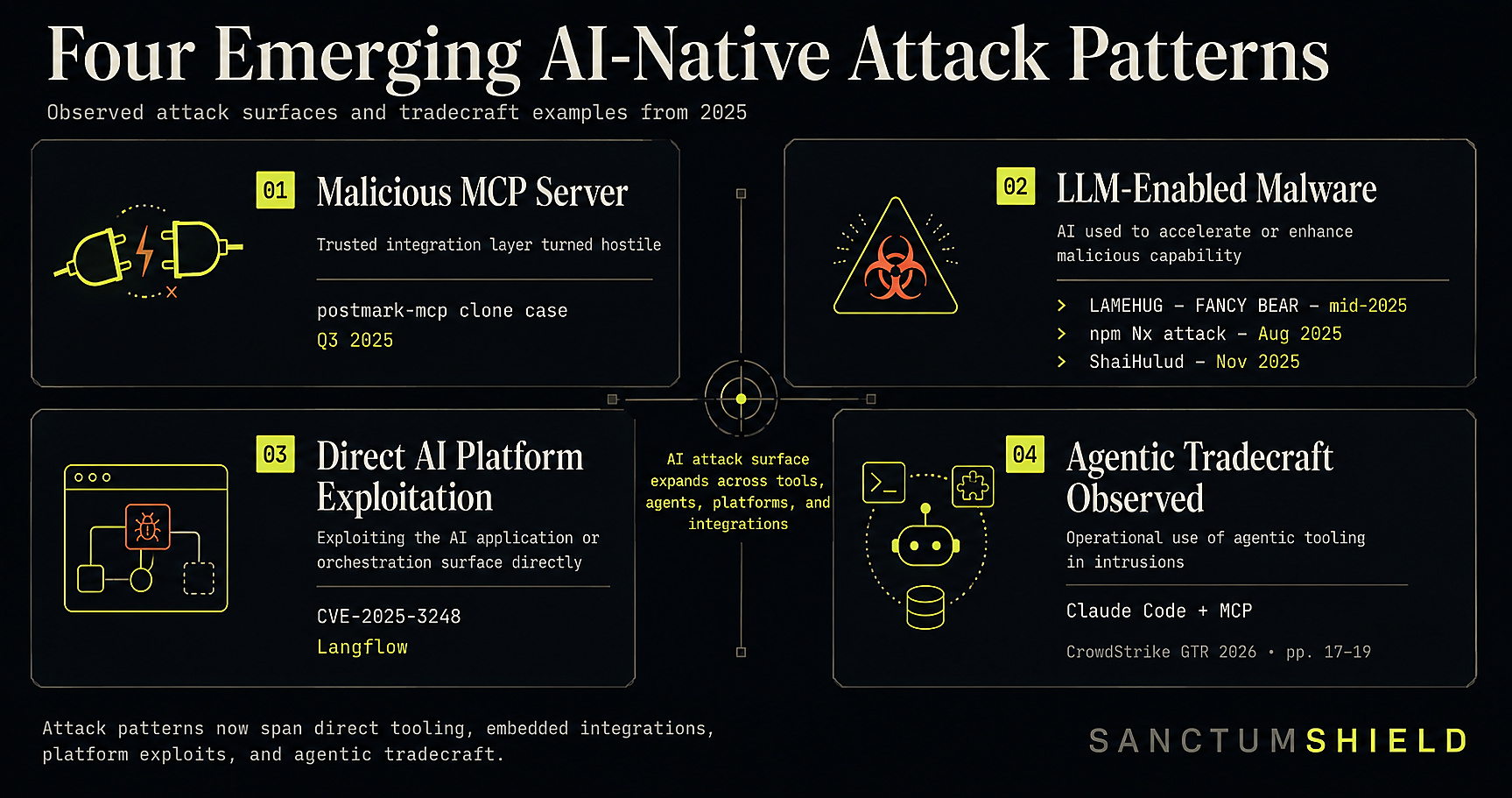

This is not theoretical. Adversaries are already operating in the agentic era.

The regulators wrote the law because the threat is real and quantified. Current published cybersecurity research from CrowdStrike — observed across trillions of telemetry events in 2025 — confirms that adversaries are already exploiting AI tools, agentic infrastructure, and the unmonitored Shadow AI surface most mid-market organizations have no governance evidence for.

- Malicious MCP servers — adversaries published postmark-mcp, a clone of a legitimate Postmark MCP server, and forwarded users’ emails to attacker-controlled addresses (Q3 2025).

- LLM-enabled malware — FANCY BEAR’s LAMEHUG embedded a Hugging Face-hosted LLM (Qwen2.5-Coder-32B) directly in the malware to generate reconnaissance commands at runtime (mid-2025); the npm Nx supply-chain attack used victims’ own Claude and Gemini CLI tools to generate credential-theft commands (Aug 2025); ShaiHulud, a self-propagating npm worm, compromised 690 packages by Nov 2025.

- Direct AI platform exploitation — CVE-2025-3248 in Langflow (a low-code AI agent platform) abused since April 2025 for ransomware deployment and credential access.

- Agentic AI tradecraft already observed — adversaries using Claude Code + MCP tools for minimally-supervised operations.

CrowdStrike Intelligence observed AI use across every phase of adversary operations rising sharply year over year.

Source: CrowdStrike, 2026 Global Threat Report (foreword, p3, p9, p11, p15–19, p32, p35, p39); CrowdStrike, Five Steps for Frontier AI Security Readiness (p4 “Zero Day Clock” chart based on 3,531 CVE-exploit pairs from CISA KEV, VulnCheck KEV, XDB). CrowdStrike requires registration to download both reports; SanctumShield publishes its content openly — no email gate, no friction, no cybersecurity education used as a sales lure.

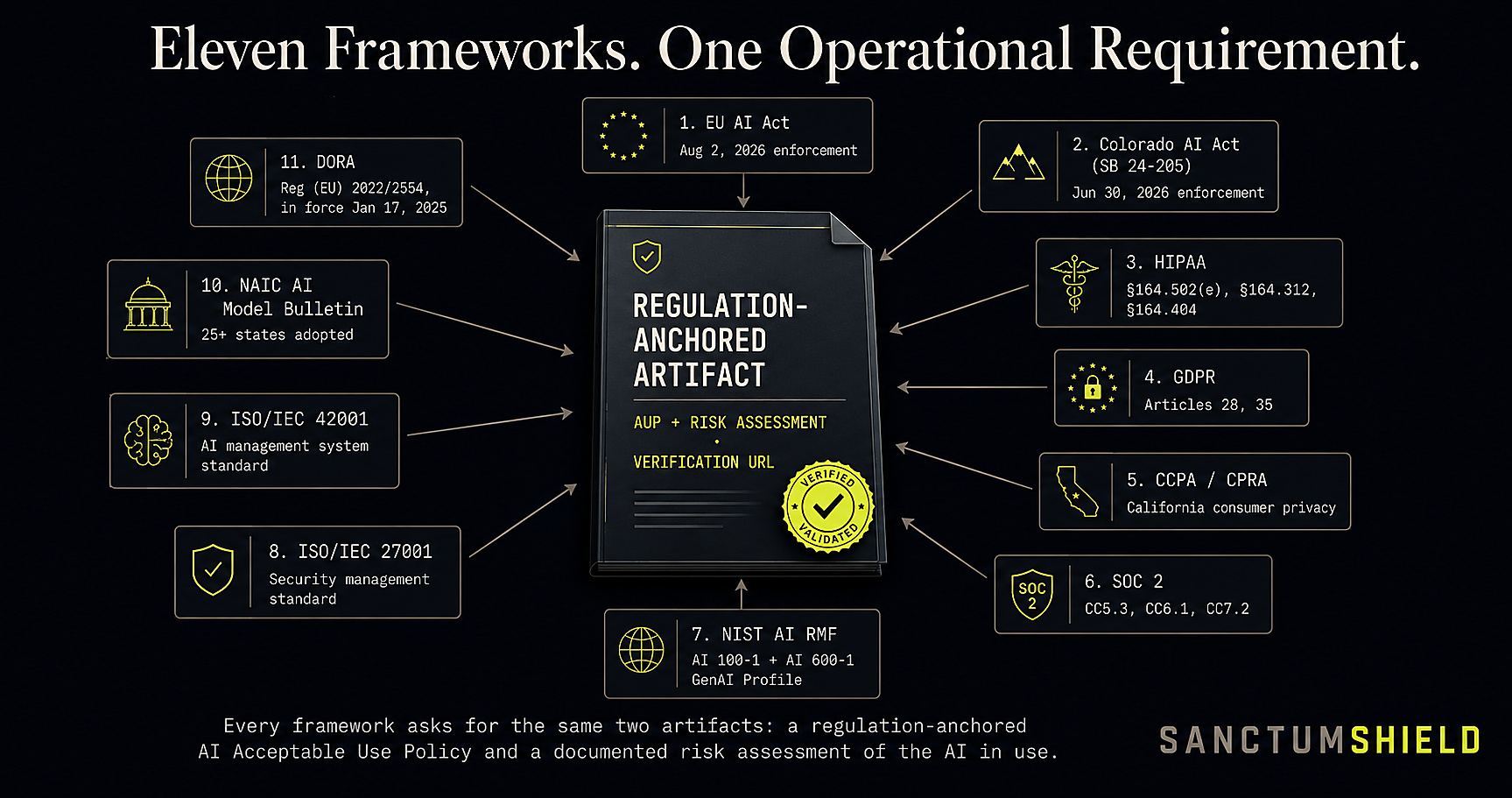

Each regulatory regime asks for a small set of evidentiary artifacts. SanctumShield produces them.

| Regulation | What it asks for | SanctumShield artifact |
|---|---|---|
| EU AI Act, Articles 9 + 11 | Risk management system; technical documentation retained 10 years | Executive Risk Report; AUP § 14 deployed-agent governance |
| EU AI Act, Article 12 | Automatic recording of events; tamper-evident expectation | Verification URL queryable for 5 years |
| EU AI Act, Article 50 | Transparency that AI is in use; labeling of synthetic content | AUP § 5.3 (patient-facing AI disclosure); AUP § 14.2 (agent registration) |
| Colorado AI Act | Risk management policy; annual impact assessment; consumer notification; AG disclosure | AUP + Risk Report. NIST RMF alignment provides affirmative defense |
| NIST AI RMF | GOVERN, MAP, MEASURE, MANAGE evidence across 72 subcategories | Findings cite specific subcategories (GOVERN-1.4, MAP-4.1, MEASURE-2.7) |
| ISO/IEC 42001 | AI management system: policy, roles, risk assessment, controls, audit | AUP + Risk Report = the policy and risk-assessment instruments |
| HIPAA § 164.502(e), § 164.312, § 164.404 | BAA coverage of AI sub-processors; technical safeguards; breach notification readiness | AUP § 5 healthcare restrictions; Risk Report flags BAA gaps and embedded AI exposure |
| SOC 2 CC5.3, CC6.1, CC7.2 | Documented AUP; logical access controls extending to AI tools; monitoring | Generated AUP closes CC5.3; Risk Report evidences CC7.2 monitoring |
| GDPR Articles 28 + 35 | Sub-processor disclosure; DPIA for high-risk processing | Tool registry doubles as sub-processor inventory; Risk Report flags missing DPIAs |
| Cyber insurance renewal | AI governance attestation; AUP existence; tool inventory; training evidence | All three artifacts: AUP, Risk Report, and a verification URL the underwriter can paste |
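The table above can also be read as a lookup from regime to artifact. The sketch below is a simplified reading of the third column: it collapses each cell to the artifact names it mentions and drops section numbers. The mapping and both helper functions are hypothetical, and the NIST AI RMF row is assigned to the Risk Report on the assumption that the cited findings live there.

```python
# Simplified reading of the table above: regime -> artifacts named in its row.
ARTIFACTS_BY_REGIME: dict[str, frozenset[str]] = {
    "EU AI Act, Articles 9 + 11": frozenset({"AUP", "Risk Report"}),
    "EU AI Act, Article 12": frozenset({"Verification URL"}),
    "EU AI Act, Article 50": frozenset({"AUP"}),
    "Colorado AI Act": frozenset({"AUP", "Risk Report"}),
    "NIST AI RMF": frozenset({"Risk Report"}),  # assumption: findings are in the report
    "ISO/IEC 42001": frozenset({"AUP", "Risk Report"}),
    "HIPAA": frozenset({"AUP", "Risk Report"}),
    "SOC 2": frozenset({"AUP", "Risk Report"}),
    "GDPR Articles 28 + 35": frozenset({"Tool registry", "Risk Report"}),
    "Cyber insurance renewal": frozenset({"AUP", "Risk Report", "Verification URL"}),
}


def artifacts_for(regimes: list[str]) -> list[str]:
    """Union of artifacts needed to answer every regime in `regimes`."""
    needed: set[str] = set()
    for regime in regimes:
        needed |= ARTIFACTS_BY_REGIME[regime]
    return sorted(needed)


def regimes_citing(artifact: str) -> list[str]:
    """Regimes whose table row names `artifact`, in table order."""
    return [r for r, named in ARTIFACTS_BY_REGIME.items() if artifact in named]


print(artifacts_for(["Colorado AI Act", "EU AI Act, Article 12"]))
print(regimes_citing("Verification URL"))
```

Read this way, the AUP and the Risk Report appear in most rows, which is the overlap the table is meant to show.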

The structural cost of waiting until late 2026 is asymmetric.

The downside cases that surface in audit, board, or claim contexts share a pattern. All six are addressable today by a regulation-anchored AUP and a risk assessment artifact. That is the entire SanctumShield product.

EU AI Act enforcement triggers, including ad-hoc requests from National Competent Authorities for documentation under Article 11 and logs under Article 12. Fines scale with global turnover.

SOC 2 Type II auditors noting CC5.3 exceptions for an absent AI Acceptable Use Policy. Exception language follows the company through subsequent renewal cycles and surfaces in customer DPAs.

Enterprise procurement questionnaires (SIG Lite, CAIQ, custom security reviews) increasingly request the AUP, AI tool registry, sub-processor list, and risk-assessment evidence. Inability to produce these blocks deals.

Cyber renewal questionnaires asking AI governance questions. ‘No, we don’t have an AUP’ contributes to premium increases, retention raises, or AI-specific carve-outs in the renewed policy.

D&O exposure for ‘failure to supervise AI use’ has surfaced in the 2025–2026 D&O cycle. Counsel guidance to boards now routinely asks: ‘what is our AI governance program’ — and the absence of an answer is itself a finding.

An employee pastes a customer contract or PHI into an unmanaged AI tool. The breach-notification clock starts. The first thing counsel asks for is the AUP that prohibited the action. Having no AUP increases both the legal exposure and the reputational damage.

Most existing AI governance documentation falls into one of three categories — and none of them is independently verifiable.

Downloaded from a vendor blog, edited lightly, signed by a CISO. Satisfies a checkbox but cites no clauses. Fails the first audit question that asks ‘how did you arrive at this control set.’

$50K–$250K, six months old, no log evidence, not refreshable, not queryable. Excellent for the moment of signature; useless six months later when the buyer’s AI tool stack has changed.

$15K–$40K, legally defensible at the moment of writing, immediately stale once a new AI tool is adopted, no observation, no machine-readable structure.

SanctumShield’s verification URL solves this directly. Every Executive Risk Report and AUP carries a unique URL queryable for five years. An auditor or insurance underwriter pastes the URL into a browser and immediately confirms when the report was generated, which AI model produced it, which AI endpoint registry version was used, and the company name. The contents are never exposed; verification only confirms the document is genuine and unaltered.

This maps directly to EU AI Act Article 12 evidentiary intent (automatic logging, tamper-evident expectation), to NIST AI RMF MEASURE-2.7 (documented security evidence), and to the cyber insurance underwriting reality that an artifact you cannot verify is an artifact you cannot price.
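Verification that confirms a document is unaltered without exposing its contents is commonly built on a cryptographic digest. The sketch below shows that general technique and is not a description of how SanctumShield implements its verification URL. The `VerificationRecord` fields mirror the metadata listed above, but the class, the function names, and the sample values are all hypothetical.

```python
import hashlib
import hmac
from dataclasses import dataclass


@dataclass(frozen=True)
class VerificationRecord:
    """Metadata a verifier could publish; the document itself is not stored."""

    company: str
    generated_at: str
    model: str
    registry_version: str
    sha256: str


def make_record(document: bytes, *, company: str, generated_at: str,
                model: str, registry_version: str) -> VerificationRecord:
    """Record the metadata plus a SHA-256 digest of the document bytes."""
    digest = hashlib.sha256(document).hexdigest()
    return VerificationRecord(company, generated_at, model, registry_version, digest)


def is_unaltered(document: bytes, record: VerificationRecord) -> bool:
    """True if the presented document hashes to the recorded digest."""
    presented = hashlib.sha256(document).hexdigest()
    return hmac.compare_digest(presented, record.sha256)


# Hypothetical sample values for illustration.
report = b"Executive Risk Report, example contents"
record = make_record(report, company="Example Co", generated_at="2026-05-04T12:00:00Z",
                     model="example-model", registry_version="example-registry-1")
print(is_unaltered(report, record))
print(is_unaltered(report + b" edited", record))
```

Because the record holds only a digest and metadata, a third party holding the document can confirm it matches without the verifier ever serving the contents.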

Three regulatory regimes — EU AI Act, Colorado AI Act, NIST AI RMF — reach the same operational requirement from three different directions.

Each one asks the deployer of AI to produce two artifacts: a regulation-anchored AI Acceptable Use Policy, and a risk assessment of the AI in use. Plus, increasingly, evidence that those artifacts are genuine.

Mid-market organizations — 50 to 2,000 employees — cannot reasonably afford the $50,000 to $250,000 consultancy engagement those artifacts have traditionally required. They need the same artifact at a price that makes sense for a 250-person company. They need it in ten minutes, not ten weeks. They need it customized to their industry, their jurisdictions, their actual AI tools — not a generic template. And they need it verifiable by the third parties who will read it.

That is what SanctumShield does. $99 a month. Month-to-month. Cancel anytime.

Every regulatory claim on this page is anchored to a primary source. If a CISO’s counsel asks where a citation comes from, the answer is here. Each URL below is a live, clickable hyperlink to the official government, ISO, AICPA, or NIST authority — no vendor-published interpretations.

Regulation (EU) 2024/1689 — the European Union’s comprehensive AI law, with high-risk obligations enforceable August 2, 2026. Penalties up to €35M or 7% of global turnover.

Senate Bill 24-205, signed May 17, 2024. Original effective date February 1, 2026, delayed to June 30, 2026. The first comprehensive U.S. state AI law.

AI RMF 1.0 (NIST AI 100-1) released January 26, 2023. Generative AI Profile (NIST AI 600-1) released July 26, 2024. Provides the affirmative-defense pathway for Colorado AI Act ‘reasonable care.’

AI Management System Standard, published December 2023. The first international management system standard for artificial intelligence.

45 CFR Parts 160 and 164. AI-relevant clauses: §164.502(e) (BAAs cover AI sub-processors), §164.312 (technical safeguards extend to AI tools handling PHI), §164.404 (breach notification).

AICPA Trust Services Criteria (TSC) 2017, updated 2022. AI-relevant Common Criteria: CC5.3 (control activities), CC6.1 (logical access), CC7.2 (system operations and monitoring).

Regulation (EU) 2016/679. Article 28 covers sub-processors (AI vendors qualify); Article 35 requires DPIAs for high-risk processing — covers most generative AI use of personal data.

Adversarial Threat Landscape for Artificial-Intelligence Systems — MITRE’s authority on AI-specific attacker tactics and techniques (prompt injection, model evasion, training-data poisoning, model extraction, supply-chain compromise, agent abuse). The reference framework for describing agentic-AI threat techniques in the same vocabulary security teams use for ATT&CK.

The globally-adopted knowledge base of adversary tactics, techniques, and procedures (TTPs) observed in real-world cyber intrusions. The reference SOC analysts, threat hunters, red teams, and security vendors use to describe attacker behavior in a common vocabulary.

The most-cited industry taxonomy of LLM and agentic AI security risks — prompt injection, insecure output handling, training-data poisoning, model DoS, supply chain vulnerabilities, sensitive information disclosure, excessive agency, overreliance, and agent-specific risks (excessive autonomy, identity spoofing, multi-agent orchestration risks).

The U.S. federal authority for Zero Trust architecture — the security model that treats every request as untrusted until authenticated and authorized. Foundational reference for governing non-human agent identities and the Shadow AI traffic that exploits implicit-trust legacy network models.

Looking for the consolidated authority table covering all 16 regulations, standards, and threat-model frameworks SanctumShield maps against? See the full Authoritative References table in the glossary.