A2A (Agent-to-Agent protocol)

Agent Protocols: The open standard for agents to discover and call each other

The protocol agents use to discover, authenticate to, and invoke one another. Became a Linux Foundation standard in 2025. Defines both the wire protocol and the Agent Card descriptor format. The “universal translator” layer of the agent stack — relevant for any organization using multi-agent frameworks (CrewAI, AutoGen, LangGraph, ADK).

A2A Discovery Governance Gap

SanctumShield Finding Types: Multi-agent framework in use without an agent-discovery boundary policy

SanctumShield finding type. Triggered when a customer indicates use of multi-agent frameworks (ADK, CrewAI, AutoGen, LangGraph) without an A2A discovery boundary policy in their AUP. Cites EU AI Act Article 13 (transparency for high-risk AI systems) and SOC 2 CC7.2 (monitoring of system operation).

Adversarial Validation

AI Governance, Policy: Validation by a second LLM from a different vendor, prompted to argue against the first

Validation performed by a second AI model, from a vendor different from the one producing the output being validated, prompted to argue against the primary model’s recommendation. Distinct from conversational pushback, which Randazzo et al. (2025) show triggers persuasion escalation rather than correction. SanctumShield’s AUP clause PEV-001 prescribes adversarial validation as one of three acceptable validation methods (alongside independent recomputation and structured disconfirmation prompts) for outputs from consequential AI workflows. The vendor-diversity requirement matters: a Claude-validating-Claude or Gemini-validating-Gemini check is structurally subject to the same persuasion-bombing failure mode.

Agent Card

Agent Governance, Agent Protocols: A cryptographically signed descriptor an agent publishes about itself

Part of the A2A standard. An agent’s “business card” — declares identity, capabilities, available tools, security schemes, and OAuth scopes. Cryptographically signed so another agent can verify what it’s talking to before making the first call. Discovery Poisoning attacks exploit agent cards that change between discovery and runtime.

Agent Gateway (Pillar 2)

Agent Governance: Air traffic control for every agent call

Pillar two of Google’s Agent Platform — an Envoy-based data plane that intercepts every agent ingress and egress call and enforces policy at the wire. Performs deep parsing of JSON-RPC bodies (MCP and A2A traffic) so semantic policies can be enforced before the call leaves the perimeter.
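
The body-level inspection described in this entry can be sketched in a few lines. This is a minimal illustration, not Google's implementation: `ALLOWED_TOOLS` and `check_jsonrpc_call` are hypothetical names, and the sketch assumes MCP-style `tools/call` requests in which the tool name sits in `params.name`. Letting non-tool-call methods through is also an assumption made for brevity.

```python
import json

# Hypothetical policy: the only tools this application is allowed to call.
ALLOWED_TOOLS = {"search_docs", "get_ticket"}


def check_jsonrpc_call(raw_body: str) -> tuple[bool, str]:
    """Return (allowed, reason) for a single JSON-RPC request body."""
    try:
        msg = json.loads(raw_body)
    except json.JSONDecodeError:
        return False, "body is not valid JSON"
    if not isinstance(msg, dict) or msg.get("jsonrpc") != "2.0":
        return False, "not a JSON-RPC 2.0 request"

    method = msg.get("method")
    if method != "tools/call":
        # Assumption: only tool invocations are policy-relevant in this sketch.
        return True, f"method {method!r} is not a tool call"

    params = msg.get("params")
    tool = params.get("name") if isinstance(params, dict) else None
    if tool in ALLOWED_TOOLS:
        return True, f"tool {tool!r} is allowlisted"
    return False, f"tool {tool!r} is not allowlisted"
```

The decision depends entirely on fields inside the request body. Two requests with identical HTTP headers can receive opposite verdicts, which is why header-only inspection is not enough for this traffic.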

Agent Governance

Agent Governance, AI Governance: The category of governing agents, MCP servers, A2A discovery, and shadow AI

The umbrella category Google formalized at Cloud Next '26 — covering AI agents, MCP servers, agent-to-agent (A2A) discovery chains, and shadow AI tools that don’t fit a traditional API gateway, SIEM, IAM, or GRC platform. The superset that subsumes the older “AI governance,” “shadow AI,” and “AI-SPM” framings.

Agent Identity (Pillar 3)

Agent Governance, Agent Identity: Cryptographic, ephemeral, scoped identity for each agent

Pillar three of Google’s Agent Platform: agents are first-class principals with their own identity class, distinct from human users and traditional service accounts. Built on SPIFFE / Managed Workload Identity. Internal agent calls operate under assumed-trust mTLS; external calls require zero-trust verification on every request.

Agent Identity Model Gap

SanctumShield Finding Types: Agents authenticate with shared keys, not cryptographic ephemeral identity

SanctumShield finding type. Triggered when deployed agents authenticate using long-lived service account credentials, shared API keys, or embedded secrets, rather than the SPIFFE-based ephemeral, scoped, attestable identity that Google’s Pillar Three architecture defines. Cites NIST AI RMF MANAGE-1.3 and NIST SP 800-63 adapted for non-human identities.

Agent Observability (Pillar 5)

Agent Governance: Unified telemetry across multi-agent swarms

Pillar five of Google’s Agent Platform — uses OpenTelemetry GenAI conventions plus A2A trace headers to correlate every LLM prompt, A2A handoff, and tool call under a single Application ID. Required infrastructure for any audit that needs to reconstruct what an agent decided and why (relevant to EU AI Act Article 13 transparency obligations).

Agent Registry (Pillar 1)

Agent Governance: A unified catalog of every agent and MCP server in the enterprise

Pillar one of Google’s Agent Platform — the answer to “what agents do we actually have?” Eliminates Shadow AI by enforcing a separation between visibility (the full catalog) and access (the per-application least-privilege boundary an agent actually sees at runtime). Visibility does not equal access.

Agent Skills

Agent Governance, Agent Protocols: An open standard for describing what an agent can do

Anthropic-originated open standard (October 2025, open-sourced December 2025) for describing agent capabilities in a structured machine-readable format. Used alongside MCP and A2A to complete the modern agent component model: LLM + retrieval context + Agent Skills + MCP + A2A.

Agent-as-Developer

Agent Governance, Risk & Assessment: The pattern where AI agents author production code and a human manager reviews agent attestations rather than the code itself

Pattern demonstrated publicly by Google at Cloud Next '26 (“Build AI Agents with Agents: Multi-Agent PR Roaster” and the ADK + Multi-Agent Patterns sessions). A coding agent is delegated a task end-to-end — scaffolding, code generation, evaluation, deployment, observability — with the human engineer reviewing the agent’s attestations and final artifact rather than the underlying code. Introduces a new governance question: where does the coder-agent get its policy and security guardrails from, and who owns the policy chain when the human reviewer never reads the code?

Agent-Authored Code Governance Gap

SanctumShield Finding Types, Agent Governance: When a coding agent produces production code with no documented policy chain or change-management evidence

SanctumShield finding type. Triggered when a customer’s engineering organization has adopted coding agents (Cursor agents, Claude Code, Gemini CLI, Google Antigravity, Google’s google-agents-cli, GitHub Copilot Workspace) without a documented policy answering: (1) where does the coder-agent get its policy and security context from, (2) who is the named human accountable for code the agent produced, and (3) what change-management evidence is captured when an agent commits or deploys. Cites NIST AI RMF MANAGE-1.3 (post-deployment monitoring), SOC 2 CC8.1 (change management), and EU AI Act Article 14 (human oversight).

Agent-Driven SSRF

Threat & Attack Patterns: Server-side request forgery, but the server is an agent interpreting natural language

SSRF where the attacker doesn’t need to craft an HTTP request — they just need to convince an agent (via a prompt or consumed content) that it should fetch a URL. Internal cloud metadata endpoints (the AWS ECS task metadata service at 169.254.170.2 for example) are canonical targets.
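
One common mitigation is to check every URL an agent wants to fetch before the request is made. The sketch below is illustrative only: `ALLOWED_HOSTS` and `is_fetch_allowed` are hypothetical names, and the deny-by-default handling of hostnames is an assumption. It does not resolve DNS, so a production guard would also need to check the addresses a hostname resolves to.

```python
import ipaddress
from urllib.parse import urlsplit

# Hypothetical allowlist of hostnames the agent may fetch from.
ALLOWED_HOSTS = {"example.com"}


def is_fetch_allowed(url: str) -> bool:
    """Reject URLs that point at non-public addresses or unlisted hosts."""
    try:
        parts = urlsplit(url)
        host = parts.hostname
    except ValueError:
        return False  # malformed URL
    if parts.scheme not in ("http", "https") or not host:
        return False
    try:
        addr = ipaddress.ip_address(host)
    except ValueError:
        # Not an IP literal: allow only explicitly listed hostnames.
        return host in ALLOWED_HOSTS
    # IP literal: link-local, loopback, and private ranges are not global.
    return addr.is_global
```

With this check, a fetch of `http://169.254.170.2/...` is refused because the address is link-local, regardless of how persuasive the prompt that requested it was.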

AI Acceptable Use Policy (AUP)

AI Governance, Policy: The written policy that tells employees what AI they can use, and how

A formal document describing which AI tools employees are allowed to use, on what data, for what purposes, and under what controls. A production-grade AUP contains approximately fourteen sections (including scope, permitted use, prohibited use, data handling, vendor review, training data restrictions, incident reporting, sanctions, review cadence, and legal review), customized to the company’s industry, jurisdictions, and applicable frameworks, and signed by leadership. Cyber insurance renewals, SOC 2 audits, HIPAA reviews, and enterprise procurement cycles now routinely ask for the AUP as evidence.

AI Application (Unit of Management)

Agent Governance: The bounded swarm of agents and tools that delivers a business capability

Google’s reframing introduced at Cloud Next '26 — the AI application, not the individual agent or model, is what gets governed. Policies attach to the application boundary; cost attribution rolls up to the application; the kill-switch operates at the application level. A single agent cannot be governed in isolation.

AI Application Perimeter Gap

SanctumShield Finding Types: Governance attached to hosts/containers, not to the AI application boundary

SanctumShield finding type. Triggered when an organization governs agents individually rather than as bounded AI applications. Policy attached to hosts or containers cannot scale to multi-agent systems where the meaningful unit of governance is the application, not the agent. Cites NIST AI RMF MAP-4.1 and ISO 42001 Clause 9.

AI-First SOC

Security: Operating model where AI agents handle tier-1 work and humans focus on judgment calls

Industry framing for security operations centers restructured around agent-led tier-1 detection, triage, and hunting — with humans focused on judgment calls, novel investigations, and policy decisions. The model assumes the governance-artifact layer (AUP, risk register, policy attestation) is solved elsewhere, which is the gap SanctumShield fills above the SOC tier.

AI-risk-ready-proof AUP

AI Governance, Policy: An AUP that is customized, regulation-anchored, and defensible

A term we use on this site to distinguish a real AUP from a generic template. An AI-risk-ready-proof AUP cites the actual clauses of HIPAA §164.502(e), SOC 2 CC6.1, EU AI Act Article 5, NIST AI RMF GOVERN-1.4, and GDPR Article 28 in the specific sections those clauses govern; is customized to a specific company’s industry and jurisdictions; and can be handed to a regulator, a board, a SOC 2 auditor, or an enterprise procurement reviewer without edits.

AI-SPM (AI Security Posture Management)

Security: The 2024–2025 industry term for runtime AI security posture tools

The earlier framing for products like Wiz AI-SPM, Palo Alto AI Access Security, and Cisco AI Defense — focused on runtime protection of deployed AI workloads. Largely superseded in 2026 by the broader “Agent Governance” framing, which adds policy-artifact production and coverage of shadow AI on top of the runtime protection layer.

AiTM (Adversary-in-the-Middle) Phishing

Security, AI Governance, Risk & Assessment: Phishing kits acting as real-time reverse proxies to legitimate Microsoft / Google login pages; bypasses URL detection, captures session tokens

A phishing technique in which the attacker’s landing page is a transparent reverse proxy to the legitimate identity provider login (Microsoft Entra ID, Google Workspace, Okta). The victim sees and authenticates against the real Microsoft login page, relayed through the proxy; the proxy captures the credential and the resulting session token in real time, then completes the legitimate authentication flow so the victim sees no failure. Because the victim interacts with genuine provider content throughout, traditional URL-based phishing detection often does not fire. Authority: CrowdStrike, 2026 Global Threat Report (p44). Reference cases: ShinyHunters, IMPERIAL KITTEN (EvilGinx2 against Israeli Microsoft 365 users), COZY BEAR (OAuth 2.0 device-code flow abuse against U.S.-based NGOs).

Governance angle: a regulation-anchored AUP must require phishing-resistant MFA (FIDO2 / WebAuthn / passkeys) rather than SMS, push-notification approval, or TOTP, because codes and approvals from those methods can be relayed through an AiTM proxy in real time, whereas FIDO2 credentials are bound to the legitimate origin. The AUP must also govern OAuth and Entra ID app-consent policies (limit which third-party apps users can consent to, require admin-mediated consent for sensitive scopes), conditional access bound to managed device or compliant network state, and continuous monitoring of impossible-travel and atypical token-issuance patterns. The Shadow AI overlap: many AiTM targets are Microsoft 365 tenants with embedded AI features (Copilot, Purview AI), and stolen tokens grant attackers the same AI-powered access the legitimate user holds.
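
The impossible-travel monitoring mentioned in this entry comes down to a speed calculation between two consecutive sign-ins. The sketch below is a simplified illustration under stated assumptions: `SignIn`, `is_impossible_travel`, and the 900 km/h threshold are hypothetical, and real detections also have to account for VPN egress points and imprecise IP geolocation.

```python
from dataclasses import dataclass
from datetime import datetime
from math import asin, cos, radians, sin, sqrt

EARTH_RADIUS_KM = 6371.0
MAX_PLAUSIBLE_KMH = 900.0  # assumed threshold, roughly airliner cruise speed


@dataclass
class SignIn:
    when: datetime
    lat: float
    lon: float


def haversine_km(a: SignIn, b: SignIn) -> float:
    """Great-circle distance between two sign-in locations, in kilometres."""
    dlat = radians(b.lat - a.lat)
    dlon = radians(b.lon - a.lon)
    h = sin(dlat / 2) ** 2 + cos(radians(a.lat)) * cos(radians(b.lat)) * sin(dlon / 2) ** 2
    return 2 * EARTH_RADIUS_KM * asin(sqrt(min(1.0, h)))


def is_impossible_travel(first: SignIn, second: SignIn) -> bool:
    """Flag a pair of sign-ins whose implied speed exceeds the threshold."""
    hours = abs((second.when - first.when).total_seconds()) / 3600
    distance = haversine_km(first, second)
    if hours == 0:
        return distance > 0
    return distance / hours > MAX_PLAUSIBLE_KMH
```

A replayed session token often surfaces exactly this way: the legitimate user signs in from one city and the attacker's infrastructure uses the token from another within minutes.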

API Gateway

Security: Network layer that mediates and enforces policy on HTTP/REST API traffic

A category of products including Apigee, Kong, AWS API Gateway, and MuleSoft. Built for deterministic REST/HTTP API traffic. They parse HTTP headers and apply rate limits, authentication, and routing rules. Structurally insufficient for agent governance because agent traffic lives in JSON-RPC bodies (MCP and A2A), not HTTP headers, and because policy at the prompt level is semantic, not syntactic.

BAA (Business Associate Agreement)

Compliance Frameworks, Vendors: HIPAA-required contract with any vendor handling PHI

A written agreement that a HIPAA-covered entity signs with any vendor (a “business associate”) that will handle Protected Health Information. The BAA spells out the vendor’s permitted uses, security obligations, and breach notification duties. For AI governance, any AI vendor that will process PHI needs a BAA. Most free-tier AI services will not sign one; paid enterprise tiers usually will.

Board-ready

Risk & Assessment: An artifact written for non-technical executive readers

A document or report that can be presented to a company’s board of directors without editing. Characterized by a clear headline, executive summary, risk score, business impact, and prioritized action plan — not raw logs, not control lists, not compliance checklists. “Board-ready” is a specific target audience (audit committee, general counsel, non-technical directors) with specific reading habits.

Brownfield Integration

Agent Governance: The pattern where every agent application has both an AI face and a legacy API face

Google’s framing for how agents coexist with legacy enterprise systems. Each agent application typically exposes two interfaces: an AI-facing A2A/MCP interface for other agents, and a legacy-facing REST API for traditional services. Both interfaces need to be governed — that’s the basis for SanctumShield’s Dual-Interface Governance Gap finding.

Build agents with agents

Agent Governance: Google's framing for the agent development lifecycle in 2026

Direct quote from Google’s Cloud Next '26 ADK session (“we expect you guys to build agents with building agents”). The framing positions agent construction itself as an agent task: a coding agent uses opinionated CLI skills to scaffold, code, evaluate, deploy, publish, and observe new agents. The full development lifecycle compresses from months to minutes, but the policy chain that governed the original human-developer workflow does not automatically transfer.

CAIQ

Vendor Risk: Cloud Security Alliance Consensus Assessments Initiative Questionnaire

A vendor questionnaire specifically for cloud service providers, published by the Cloud Security Alliance. Version 4 contains 261 questions covering 17 control domains. Often used alongside or in place of SIG Lite for SaaS vendor assessment. Shares the same structural limitations: self-reported, point-in-time, vendor-scoped.

CCPA / CPRA

Compliance Frameworks: California Consumer Privacy Act, amended by the California Privacy Rights Act

California’s consumer data privacy law, substantially strengthened by the CPRA amendments, which took effect in 2023. Establishes rights to know, delete, correct, and opt out of sale/sharing of personal information. CPRA adds a new category, “sensitive personal information,” and creates the California Privacy Protection Agency as the enforcement body.

CEL (Common Expression Language)

Policy: The expression language Google IAM uses for policy conditions

Google’s expression language for writing policy conditions — example: api.getAttribute('mcp.tool.isOpenWorld', false) == false. Powerful and deterministic, but written for platform engineers — not the auditor-readable natural-language policy a CISO would sign and a regulator would expect.

Claude Mythos

AI Governance, Agent Governance, frontier-ai, Risk & Assessment: Anthropic's frontier general-purpose model with strikingly capable computer-security ability, released April 7, 2026 through Project Glasswing as a careful, partner-trusted advance for defensive cybersecurity

A frontier-class general-purpose language model from Anthropic that represents a significant advance in defensive cybersecurity capability. Announced April 7, 2026 at red.anthropic.com and released as Claude Mythos Preview through Project Glasswing, Anthropic’s partner program for using Mythos to “help secure the world’s most critical software.” Anthropic positions Mythos as a general-purpose model that is “strikingly capable at computer security tasks.” Anthropic’s deliberate, partner-trusted rollout reflects a careful and measured posture toward releasing leading-edge capability, exactly the kind of disciplined deployment the cybersecurity field benefits from as frontier capability accelerates. We applaud Anthropic’s leadership here, and we recognize that much about the program’s scope, criteria, and roadmap is still emerging.

What it does (Anthropic-reported, AISI-validated): identifies vulnerabilities, generates working exploits, and chains attack steps autonomously inside controlled environments. Anthropic’s own announcement reports Mythos finding a 27-year-old OpenBSD vulnerability and a 16-year-old FFmpeg flaw, plus ~300 vulnerabilities in Firefox where Claude Opus 4.6 found ~20. The UK AI Safety Institute’s independent April 2026 evaluation reported 73% success on expert-level Capture-the-Flag challenges and autonomous completion of full 32-step corporate-network attack chains in 3 of 10 runs, in test environments AISI noted were “weakly defended” without active defenders.

Who has access: ~50 organizations are on the Glasswing partner list, including founding partners Amazon Web Services, Anthropic, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, Linux Foundation, Microsoft, NVIDIA, and Palo Alto Networks, plus other access-agreement organizations. CrowdStrike’s founding-member announcement frames the division of responsibility cleanly: “Anthropic builds the model. CrowdStrike secures AI where it executes.” The partner-trusted approach reflects Anthropic’s judgment that responsible deployment of capability at this level is best advanced through coordinated work with security-focused organizations that can apply it to defensive use cases.

Why measured access: Anthropic states Mythos is “intentionally not being made generally available” because it “could potentially pose a cybersecurity threat if weaponized by bad actors to find bugs and exploit them rather than fix them” (TechCrunch, April 7, 2026). On May 5, 2026, Anthropic CEO Dario Amodei told CNBC the industry has a “six-to-twelve-month window to patch tens of thousands of software vulnerabilities,” a candid, on-the-record framing of the urgency that motivates the Glasswing program. We share that view of the urgency, and we support the careful posture Anthropic is taking.

Government engagement: per Wall Street Journal reporting (April 30, 2026), the White House and Anthropic have engaged on the scope and pace of expanding Glasswing access. The UK AISI has published its independent evaluation. India’s SEBI has convened a task force on frontier-model cyber implications for Indian financial institutions. This kind of public-private engagement is appropriate for capabilities at this level.

The broader 2026 frontier-AI ecosystem. Mythos sits within a fast-moving global ecosystem of capable models. The UK AISI separately reported OpenAI’s GPT-5.5 achieving comparable results on autonomous-attack benchmarks; DeepSeek R1 has been reported to perform competitively on bug-detection benchmarks; and an open-source reconstruction project named OpenMythos has attracted 10,000+ GitHub stars. The straightforward implication for governance teams: organizations should plan on the assumption that frontier-class capability will continue to emerge from multiple sources globally, and shape their governance posture accordingly.

Frontier defensive AI is a meaningful innovation. It does not replace governance. Mythos, GPT-5.5, the Wiz agentic-AI announcements at Google Cloud Next ’26, and the broader 2026 wave of frontier defensive tooling all give skilled security teams faster vulnerability discovery, better attack-path mapping, accelerated triage, and more comprehensive operational scoping. They are valuable contributions to the cybersecurity defender’s toolkit. They are not a substitute for the governance discipline that surrounds the toolkit.

An AI agent can scope an audit, automate inventory discovery, and accelerate analysis. It cannot make the decisions, sign the policy, brief the board, or hold the accountability. Governance — what the organization permits, what it prohibits, what it audits, what it documents, and what it certifies to regulators, underwriters, and auditors — remains a uniquely CISO and executive responsibility under Due Care and Due Diligence standards. Automation supports that responsibility; it does not replace it.

Governance posture for the mid-market CISO (the SanctumShield POV): the work the CISO and executive team have to do is the same whether their organization is on the Glasswing partner list or not. Maintain a current AI tool registry. Publish a regulation-anchored AUP that addresses agent identity, MCP server trust verification, BYOD AI authentication, and non-human identity governance. Run a continuous Shadow AI risk audit on a cadence aligned with the new 10-hour Time-to-Exploit. Produce a third-party-validatable verification artifact every regulator, underwriter, and auditor can confirm. SanctumShield is built to make that governance discipline accessible — at $99/month, refreshed monthly — so the underserved mid-market can satisfy its compliance obligations and demonstrate Due Care and Due Diligence regardless of which frontier defensive tooling its operational SOC has access to. Anthropic and the Glasswing partners advance the leading edge; SanctumShield ensures the broader market can still meet the standard. The two efforts complement each other.

Cloud Detection and Response (CDR)

Security: The runtime-detection-and-response category for cloud workloads

Gartner-recognized category for products that detect and respond to active threats in cloud environments — distinct from CSPM (which scans for misconfigurations) and CWPP (which protects workloads). Wiz Defend, CrowdStrike Falcon Cloud Security, Palo Alto Prisma Cloud Defender, and Sysdig all compete here. CDR addresses the runtime detection layer; SanctumShield addresses the governance-artifact layer above it.

CNAPP (Cloud-Native Application Protection Platform)

Security: All-in-one cloud security platform combining CSPM, CWPP, DSPM, CIEM, and code scanning

A category dominated by Wiz, with competitors including Palo Alto Prisma Cloud, Orca Security, and Lacework. Protects cloud-native workloads at runtime. Wiz extended into Agent Governance with its Red / Blue / Green agent triad in early 2026. Excellent for engineering teams that need runtime protection of deployed AI agents — see /vs-wiz for the SanctumShield positioning relative to this category.

Coder-Validator Agent Pair

Agent Governance: Two-agent pattern in which one agent writes the code and another reviews it before merge

Reference architecture for agent-led development: a coder agent produces a change, a validator agent (often using the same or a peer LLM) evaluates it against tests and policy before submission to a human. The pair pattern reduces the rate of obviously bad code reaching humans, but does not address the underlying governance question — the validator is itself non-deterministic, and “who supervises the validator?” is a recursion problem (compare Evaluator Oversight Gap).

Coding Agent

Agent Governance: An AI agent that writes, edits, tests, and deploys application code

Examples in 2026: Cursor agents, Claude Code, Google Antigravity, Gemini CLI, GitHub Copilot Workspace, Google’s google-agents-cli. Distinct from autocomplete-style assistants because the coding agent operates across the full development lifecycle and can run shell commands, install dependencies, deploy to production, and trigger CI/CD. The runtime IAM the coding agent holds at authoring time is typically broader than what the deployed code itself runs under, and the boundary between those scopes is rarely documented.

Colorado AI Act (SB 24-205)

Compliance Frameworks: First comprehensive U.S. state AI law · effective 30 June 2026

Colorado Senate Bill 24-205, signed into law 17 May 2024 and effective 30 June 2026 (delayed from 1 February 2026). The first comprehensive U.S. state AI regulation, applying to developers and deployers of high-risk artificial intelligence systems used in consequential decisions — employment, education, financial services, healthcare, housing, insurance, legal services, and government services. Deployers must implement a risk management policy, complete an annual impact assessment, notify consumers when AI makes a consequential decision, publish a summary of AI use, and disclose discovered algorithmic discrimination to the Colorado Attorney General within 90 days. Affirmative defense: compliance with NIST AI RMF or ISO/IEC 42001 creates a rebuttable presumption of ‘reasonable care.’ Note: Colorado SB-189 (May 2026) may reshape the law before enforcement; SanctumShield artifacts remain compliant under either framework.

Confused Deputy

Threat & Attack Patterns: Attacker tricks an agent into using its own credentials against a target

Classic security attack pattern that’s especially dangerous for agents. The attacker embeds instructions in content the agent will consume (a PDF, image with hidden text, email attachment, web page) that cause the agent to act on the attacker’s behalf using its own legitimate credentials, against a legitimate target. The agent is “confused” about whose instruction it’s following.

Control checklist

Risk & Assessment: A yes/no inventory of security controls

A list of security controls against which an organization is evaluated as “in place” or “not in place.” Useful for SOC 2 audit preparation and compliance automation. Insufficient as a risk assessment, because knowing you have a control does not tell you whether the control is adequate for your actual risk exposure.

Conversational Validation (deprecated control)

AI Governance, Policy: Asking the same AI follow-up questions to verify its output; documented ineffective

The practice of validating an AI output by engaging the same AI in dialogue — asking “are you sure?”, requesting elaboration, pushing back with specific objections. Now documented as ineffective by Randazzo et al. (2025), because the AI responds with persuasion bombing rather than correction. SanctumShield’s AUP generator now classifies conversational validation as a deprecated control and prescribes adversarial second-model review, independent recomputation, or disconfirmation prompts instead. See Persuasion Bombing.

Data residency

Data Handling, Compliance Frameworks: Which country's servers your data is stored on

A contractual or regulatory requirement that customer data remain within a specific geographic region (the EU, the US, Canada, etc.). For cloud services this usually means pinning storage and sometimes compute to specific regions. GDPR Chapter V imposes restrictions on transfers of personal data outside the EU. Data residency is a frequent friction point with AI services because model inference often happens in a different region than model training.

Disconfirmation Prompt

AI Governance, Policy: A structured prompt that forces an AI to argue against its own recommendation before acceptance

A specific prompt pattern that requires an AI to articulate the strongest counter-argument against its own recommendation before the output is accepted by a human reviewer. The structured form defeats the natural tendency of LLMs to default to agreement and credibility-projection. One of the three prescribed validation methods under SanctumShield AUP clause PEV-001. Used in concert with adversarial validation, not as a replacement.
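
As a rough illustration of the pattern, a disconfirmation prompt can be assembled from a fixed template. The wording, the function name `build_disconfirmation_prompt`, and the `min_objections` parameter are all hypothetical; this is not the text of clause PEV-001, only a sketch of the structured form the entry describes.

```python
def build_disconfirmation_prompt(recommendation: str, min_objections: int = 3) -> str:
    """Wrap a model's recommendation in a prompt that demands counter-arguments first."""
    recommendation = recommendation.strip()
    if not recommendation:
        raise ValueError("recommendation must not be empty")
    if min_objections < 1:
        raise ValueError("min_objections must be at least 1")
    return "\n".join([
        "You previously recommended the following:",
        f'"""{recommendation}"""',
        "",
        "Before this recommendation is accepted, do the following in order:",
        f"1. State the strongest counter-argument against it, with at least {min_objections} distinct objections.",
        "2. Name the evidence that would prove the recommendation wrong.",
        "3. Only then say whether you still stand by it, and why.",
        "Do not apologize, reassure, or restate the recommendation before step 1.",
    ])
```

The fixed ordering is the point of the design: the counter-argument has to be produced before the model is allowed to defend its original position.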

Discovery Poisoning

Threat & Attack Patterns: First MCP/A2A discovery returns valid tools; subsequent calls return malicious ones

A specific case of the Rugpull Effect, called out by Google engineers at Cloud Next '26 BRK2-015. The first connection to an MCP server or A2A target returns a clean list of tools. The agent inherits trust. Subsequent calls return attacker-modified tools, but the agent doesn’t re-verify, and keeps using the poisoned discovery results.
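
One way to re-verify is to pin a fingerprint of the first discovery result and compare every later listing against it. The sketch below assumes tool listings arrive as JSON-serializable dictionaries with a `name` field; `fingerprint` and `PinnedDiscovery` are hypothetical names, and this is an illustration of the idea rather than a mechanism defined by MCP or A2A.

```python
import hashlib
import json


def fingerprint(tools: list[dict]) -> str:
    """Order-independent SHA-256 digest of a tool listing."""
    ordered = sorted(tools, key=lambda t: str(t.get("name", "")))
    canonical = json.dumps(ordered, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


class PinnedDiscovery:
    """Remembers the first tool listing and checks later ones against it."""

    def __init__(self, first_listing: list[dict]) -> None:
        self._pinned = fingerprint(first_listing)

    def verify(self, listing: list[dict]) -> bool:
        # Any change to a name, description, or schema alters the digest.
        return fingerprint(listing) == self._pinned
```

A failed `verify` call does not prove an attack, since tools also change for legitimate reasons, but it gives the agent a point at which to stop and re-establish trust instead of silently continuing.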

DLP (Data Loss Prevention)

Security: Technology that tries to stop sensitive data from leaving the organization

A category of security tools that inspect outbound content (email, file transfer, web traffic) for sensitive data patterns (credit cards, Social Security numbers, classified labels) and block or flag violations. Struggles with AI because modern AI traffic is encrypted HTTPS and the “sensitive data” is indistinguishable from any other text payload. Traditional DLP sees api.openai.com and stops there.
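
The pattern-matching approach, and its blind spot, can be shown with a small sketch. The regular expressions and the `find_sensitive` function are simplified, hypothetical stand-ins for what a commercial DLP engine does; the Luhn checksum is the standard check used to reduce false positives on card numbers.

```python
import re

SSN_RE = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
# 13 to 19 digits, optionally separated by single spaces or hyphens.
CARD_RE = re.compile(r"\b\d(?:[ -]?\d){12,18}\b")


def luhn_valid(digits: str) -> bool:
    """Standard Luhn checksum over a string of digits."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def find_sensitive(text: str) -> list[str]:
    """Return labels for the structured sensitive patterns found in text."""
    hits = []
    if SSN_RE.search(text):
        hits.append("ssn")
    for match in CARD_RE.finditer(text):
        digits = re.sub(r"[ -]", "", match.group())
        if luhn_valid(digits):
            hits.append("credit_card")
            break
    return hits
```

A prompt such as "Summarize our unannounced acquisition plan" returns an empty list here. Nothing in it matches a structured pattern, which is the limitation the entry describes: the sensitive content in AI prompts is often ordinary prose.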

DORA (Digital Operational Resilience Act)

Compliance Frameworks: EU Regulation 2022/2554 · applicable since 17 January 2025

The European Union’s Digital Operational Resilience Act, which entered into force in January 2023 and has applied since 17 January 2025. Applies to financial entities operating in the EU (banks, investment firms, insurers, payment institutions, crypto-asset service providers) and to their critical third-party ICT providers. Requires comprehensive ICT risk management, third-party ICT risk oversight (including AI tools and AI service providers), incident reporting, digital operational resilience testing, and information sharing on cyber threats. AI tools used in financial services are explicitly in scope as ICT third parties. Sits alongside the EU AI Act and creates overlapping obligations for high-risk AI in financial services. Financial-services customers using SanctumShield satisfy DORA’s third-party AI inventory and risk assessment requirements through the AI tools registry and Executive Risk Report.

DPA (Data Processing Agreement)

Data Handling, Compliance Frameworks: The contract governing how a vendor processes your personal data

A contract required by GDPR Article 28 between a data controller (the customer) and a data processor (the vendor) that spells out the subject matter, duration, nature, purpose, data types, and obligations of the processing. Analogous to a HIPAA BAA but for GDPR-governed personal data. Most serious B2B SaaS vendors publish a standard DPA and will sign customer DPAs on request.

Dual-Interface Governance Gap

SanctumShield Finding Types: Agent app exposes both an AI face and a legacy REST API; only one is documented

SanctumShield finding type rooted in Google’s Brownfield Integration pattern. Triggered when a deployed agent application exposes both an A2A/MCP interface and a legacy REST API but security controls have only been documented for one face. API security gaps (auth, authz, rate limiting, input validation) commonly remain on the legacy side. Cites NIST AI RMF MAP-4.1 and OWASP API Security Top 10 (2023).

Due Care

legal, Risk & Assessment: The legal standard for putting reasonable cybersecurity safeguards in place

The cybersecurity-and-legal standard requiring an organization to act as a reasonable, prudent person would in implementing safeguards proportional to the risk. For Shadow AI, an organization meeting Due Care can show a published AI Acceptable Use Policy, a documented risk assessment of the AI in use, identified controls with assigned ownership, and board-level acknowledgment of AI risk. Failing Due Care exposes the organization to negligence findings in regulator inquiries, cyber-insurance claims disputes, breach litigation, and SOC 2 exception findings — and the CISO is the person the record names.

Due Diligence

legal, Risk & Assessment: The legal standard for continuously verifying that Due Care is maintained

The companion standard to Due Care: not just whether reasonable safeguards were put in place at one point in time, but whether the organization continuously monitors, re-assesses, and updates those safeguards as the risk surface changes. For Shadow AI, Due Diligence means re-running the audit as new AI providers, embedded SaaS AI features, and autonomous agents enter the environment, and refreshing the AUP and risk assessment when regulations change. SanctumShield’s monthly registry refresh and $99/month subscription model are designed to make Due Diligence structurally feasible for organizations of 50–2,000 employees — so the CISO is not forced to choose between a $50K Big 4 snapshot and no evidence trail at all.

EDR (Endpoint Detection and Response)

SecurityAgents installed on laptops/servers that detect attacks

Security agents that run on endpoint devices, monitor process and network behavior, and detect suspicious activity. CrowdStrike Falcon, SentinelOne, and Microsoft Defender for Endpoint are leading examples. EDR agents can see some shadow AI (desktop apps, browser extensions) but miss most of it (browser-based SaaS accessed through normal web traffic).

Effective Human Oversight

AI GovernanceCompliance FrameworksThe standard required by EU AI Act Article 14 — and the standard SanctumShield specifies how to meet

The control standard required by EU AI Act Article 14, Colorado SB 24-205, NIST AI RMF’s GOVERN function, and ISO/IEC 42001 Clause 8.3. The regulations assume that a human reviewing AI output is itself an effective control. Randazzo et al. (2025) has invalidated that assumption for conversational review. SanctumShield’s position: “effective” requires controls beyond conversational pushback — namely adversarial validation, independent recomputation, or disconfirmation prompts, codified in generated AUP clauses PEV-001 through PEV-005. See Persuasion Bombing and Theatrical Oversight.

Egress Mediation Gap

SanctumShield Finding TypesDeployed agents have no consistent gateway controlling outbound calls

SanctumShield finding type. Triggered when an organization has deployed agents without any consistent gateway, proxy, or sidecar mediating outbound tool calls and A2A traffic — meaning no single point at which egress policy can be enforced or observed. Cites NIST AI RMF MAP-4.1 and NIST SP 800-207 (Zero Trust Architecture).

Ethos / Logos / Pathos Tactics

AI GovernanceRisk & AssessmentAristotle's three rhetorical modes — the taxonomy Randazzo et al. used to classify 14 LLM persuasion tactics

The three rhetorical modes Aristotle named in Rhetoric: ethos (credibility appeals: apologizing, correcting, effort transparency, hedging, deflecting), logos (logical appeals: agreeing and counter-arguing, comparing, data-insight overlays, problem-solution structuring), and pathos (emotional appeals: affirming, energizing, inclusivity language, mirroring, visioning). Randazzo et al. (2025) used this taxonomy to classify 14 specific persuasion tactics observed in GPT-4 responses to validation challenges. The taxonomy is the foundation of SanctumShield’s research vocabulary; the tactics themselves are detectable in transcript analysis.

EU AI Act

Compliance FrameworksThe European Union AI Act — the first major AI-specific law

Adopted in 2024, with obligations phasing in from 2025 through 2027. Classifies AI systems into four risk tiers: unacceptable (prohibited), high-risk (subject to extensive obligations), limited-risk (transparency obligations), and minimal-risk. Article 5 prohibits specific practices (including social scoring, real-time remote biometric identification in public spaces for law enforcement, and manipulative or deceptive techniques). High-risk AI systems face conformity assessment, technical documentation, and post-market monitoring obligations.

Evolutionary Chasm (N=1 vs N=100)

Agent GovernanceRisk & AssessmentGovernance is linear in agent count — risk is quadratic in interactions

Google’s framing for the gap between demo-stage agent deployments (one agent, isolated) and production-stage deployments (a hundred agents in one application interacting via A2A and MCP). Quote: “Productizing multi-agent systems is 100× harder. Massive Shadow AI sprawl.” The case for explicit governance over informal policy.
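The linear-versus-quadratic contrast in this entry's subtitle can be made concrete with a small calculation. The sketch below assumes the simplest possible model, in which any two agents in an application may interact; the function name is illustrative and is not part of Google's framing.

```python
def potential_channels(agent_count: int) -> int:
    """Count distinct agent pairs, assuming any two agents may interact."""
    if agent_count < 0:
        raise ValueError("agent_count must be non-negative")
    # n choose 2: grows quadratically while the agent count grows linearly.
    return agent_count * (agent_count - 1) // 2


for n in (1, 10, 100):
    print(f"{n} agents -> {potential_channels(n)} potential pairwise channels")
```

Under that assumption a single agent has no agent-to-agent channels to govern, while a hundred agents have 4,950, which is the gap an informal policy has to cover.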

Fake CAPTCHA Lure

SecurityAI GovernanceRisk & Assessment2025's fastest-growing social-engineering pattern — fake "verify you're human" pages tricking users into pasting attacker-supplied shell commands

A social-engineering lure that mimics the “verify you’re human” CAPTCHA challenge users see across the modern web. The malicious version then instructs the victim to paste an attacker-supplied PowerShell, shell, or Run-dialog command into their own terminal as part of the purported verification — bypassing every browser-based security control because the malicious payload never crossed the network as content; it crossed as user-typed text. Authority: CrowdStrike, 2026 Global Threat Report (p12). CrowdStrike observed a 563% increase in incidents using fake CAPTCHA lures in 2025 compared to 2024 — the steepest growth curve of any social-engineering pattern in the report.

Governance angle: every regulation-anchored AUP must mandate Shadow-AI-aware security awareness training that explicitly covers ClickFix and fake-CAPTCHA patterns. Every Shadow AI risk assessment must enumerate which corporate-data-touching endpoints (managed laptops, BYOD devices, virtual desktops) permit user-typed-shell-execution and what compensating controls exist. The relationship to Shadow AI: AI-translated and AI-localized lures (CrowdStrike documents RENAISSANCE SPIDER’s use of AI to translate ClickFix lures into Ukrainian) make these campaigns more credible at scale and more language-targeted than manual translation could ever produce.

Frontier AI

AI GovernanceSecurityThe new class of highly capable AI systems advancing defensive cybersecurity capability — and reshaping the governance work that surrounds them

A category describing the most capable AI systems — models that can reason across complex tasks, analyze software, identify vulnerabilities, accelerate exploit development, and support increasingly sophisticated security workflows. Anthropic’s Claude Mythos and OpenAI’s GPT-5.5 are the canonical 2026 examples; CrowdStrike cites both in Five Steps for Frontier AI Security Readiness (p3). Frontier-AI deployment in cybersecurity is one of the most important defensive innovations of 2026 — alongside Google Cloud Next ’26’s announcements pairing Wiz’s agentic AI with operational automation, and Cisco / Palo Alto / CrowdStrike’s complementary frontier-model partnerships — representing a careful, measured industry effort to keep leading-edge capability on the side of defenders.

CrowdStrike cites frontier AI in the context of an observed Time-to-Exploit (TTE) collapse from 2.3 years (2018) to 23.2 days (2024) to 10 hours (2025), based on 3,531 CVE-exploit pairs. The defensive innovations are real and welcome, and they do not reduce the governance work surrounding them. Frontier defensive AI gives skilled analysts faster vulnerability discovery, better attack-path mapping, and accelerated triage; it does not replace executive decision-making, policy ownership, board acknowledgment, or the regulation-anchored governance artifact that regulators, underwriters, and auditors require. See the Claude Mythos entry for the full primary-source detail and the SanctumShield governance posture that pairs with these innovations.

GDPR

Compliance FrameworksGeneral Data Protection Regulation (EU, 2018)

The European Union’s comprehensive data protection law. Applies to any organization processing personal data of EU residents, regardless of where the organization is located. Key clauses for AI governance: Article 28 (processor obligations and sub-processor governance), Article 35 (Data Protection Impact Assessments for high-risk processing), and Article 22 (rights regarding automated decision-making).

Generative AI (GenAI)

AI GovernanceAI systems that produce text, code, images, audio, or video

AI systems trained on large datasets that generate new content in response to a prompt — the category that includes ChatGPT, Claude, Gemini, Copilot, Midjourney, DALL·E, Suno, and everything built on top of them. Distinct from the earlier generation of AI which was primarily predictive (fraud detection, recommendation, classification).

Google Security Operations

VendorsSecurityGoogle's unified SOC platform — formerly Chronicle Security Operations

Google’s flagship SecOps platform combining SIEM (Chronicle), SOAR (Siemplify), and threat intelligence (Mandiant) under one Gemini-powered analyst experience. Sold both standalone and bundled with Google Cloud. Sets the reference architecture for the “AI-first SOC” category several other vendors (Microsoft Sentinel, Splunk, Cortex XSIAM) are now retrofitting toward.

GPT-5.5 (and GPT-5.4-Cyber)

AI Governancefrontier-aiOpenAI's frontier model demonstrating capable autonomous-attack benchmarks — part of the broader 2026 frontier-AI ecosystem

OpenAI’s frontier general-purpose model, named alongside Claude Mythos in CrowdStrike’s Five Steps for Frontier AI Security Readiness (p3) as an early example of frontier capability reshaping security workflows. A UK AI Safety Institute evaluation reported GPT-5.5 achieving comparable results to Mythos on autonomous cyber-attack simulation benchmarks. CrowdStrike also references “GPT-5.4-Cyber” as an OpenAI security-focused frontier variant. The straightforward observation: frontier-class capability is advancing across multiple model families and providers globally. From a governance posture standpoint, organizations should plan on the assumption that capable models will continue to emerge from many sources, and shape their AUP, AI tool registry, agent identity governance, and continuous re-audit cadence accordingly. The specific tooling a SOC has access to changes the operational defense; it does not change the governance artifacts the CISO and executive team are accountable for.

GRC (Governance, Risk, and Compliance)

SecuritySoftware that automates compliance checklist tracking

A category of products including OneTrust, Vanta, Drata, Secureframe, and Tugboat Logic. Tracks whether security controls exist (yes/no) for SOC 2, ISO 27001, HIPAA, and similar frameworks. Not generative — doesn’t produce an AI Acceptable Use Policy, an Executive Risk Report, or a verifiable audit artifact. The SOC 2 auditor will ask about AI governance; the GRC dashboard will show a checkbox, not an answer.

HIPAA

Compliance FrameworksHealth Insurance Portability and Accountability Act (US, 1996)

The US federal law governing the privacy and security of Protected Health Information (PHI). Under the Privacy Rule and Security Rule, covered entities and business associates must implement administrative, physical, and technical safeguards. For AI governance, the critical clauses are §164.502(e) (business associate agreements with sub-processors) and §164.312 (technical safeguards for ePHI).

Human-in-the-Loop (HITL)

AI GovernancePolicyThe control pattern of requiring a human review step in an AI workflow

The control pattern of requiring a human review or approval step in an AI-driven workflow, named explicitly in EU AI Act Article 14, Colorado SB 24-205, and NIST AI RMF, and implicit in HIPAA administrative safeguards and ISO/IEC 42001. Note (2026): per Randazzo et al. (HBS Working Paper 26-021, July 2025), human-in-the-loop alone is insufficient as a control when the “loop” involves the same AI being challenged conversationally — the AI responds with persuasion escalation rather than correction. SanctumShield recommends supplementing HITL with adversarial validation, independent recomputation, or disconfirmation prompts — codified in generated AUP clauses PEV-001 through PEV-005. See Persuasion Bombing, Adversarial Validation, and Effective Human Oversight.

IAM (Identity and Access Management)

SecuritySystems that authenticate users and grant them access to resources

A category of products including Okta, Auth0, Microsoft Entra (Azure AD), and Ping Identity. Built around two identity classes: human users (long-lived, MFA-protected) and service accounts (long-lived credentials for non-interactive workloads). Doesn’t fit agents — agents need ephemeral, scoped, cryptographically attested identities. Hence the new agentic identity primitive (Agent Identity / SPIFFE).

Impact-First Severity Model

Risk & AssessmentSeverity driven by business impact, not by technical attack technique

Wiz Red Agent’s documented severity approach: severity is based on what was actually compromised (PII extracted, credentials retrieved, system files accessed) — not on the attack technique used. SanctumShield’s Executive Risk Report adopts the same principle: findings are ranked by regulator/auditor visibility, not CVSS score.
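A minimal sketch of impact-first ranking, assuming each finding records both a technique score and what was actually compromised. The impact tiers and their ordering are hypothetical placeholders; neither Wiz nor SanctumShield publishes this exact scale in the text above.

```python
from dataclasses import dataclass

# Hypothetical impact tiers (higher = more severe); a real program defines its own.
IMPACT_RANK = {
    "pii_extracted": 3,
    "credentials_retrieved": 2,
    "system_files_accessed": 1,
    "none": 0,
}


@dataclass(frozen=True)
class Finding:
    title: str
    technique_score: float  # e.g. a CVSS-style score for the technique used
    impact: str             # key into IMPACT_RANK


def rank_by_impact(findings):
    """Order by what was compromised; the technique score only breaks ties."""
    return sorted(
        findings,
        key=lambda f: (IMPACT_RANK[f.impact], f.technique_score),
        reverse=True,
    )


findings = [
    Finding("Complex exploit chain, nothing reached", 9.8, "none"),
    Finding("Simple prompt injection, PII extracted", 5.3, "pii_extracted"),
    Finding("Misconfigured tool, credentials retrieved", 6.1, "credentials_retrieved"),
]
ranked = rank_by_impact(findings)
for f in ranked:
    print(f.impact, f.technique_score, f.title)
```

The finding with the highest technique score lands last here because nothing was compromised, which is the inversion the impact-first model is meant to produce.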

Infinite Permutation Risk

Threat & Attack PatternsRisk & AssessmentPrompts are non-deterministic — you cannot enumerate the input space

The structural reason agent governance can’t use the traditional security-testing playbook of enumerating inputs. Every prompt is novel; no two user interactions are identical. Defense requires classifier-based controls (Model Armor, Responsible AI filters, LLM-as-a-judge) rather than rule-based ones, and a policy layer that operates on intent, not syntax.

ISO 27001

Compliance FrameworksInternational standard for information security management systems

A certifiable international standard published by the International Organization for Standardization that defines an Information Security Management System (ISMS) — a structured approach to managing security risks through policies, risk assessments, and continuous improvement. The 2022 revision includes new Annex A controls relevant to cloud and AI services.

ISO/IEC 42001

Compliance FrameworksAI Management System Standard · published December 2023

The first international management system standard for artificial intelligence, published by ISO and IEC in December 2023. Establishes requirements for an organization’s AI governance program in the same way ISO/IEC 27001 establishes requirements for an information security management system. Requires an AI policy aligned with organizational context, defined roles, AI risk assessment and treatment, controls covering the AI lifecycle (planning, development, deployment, operation, retirement), supplier management, monitoring, internal audit, and continual improvement. Crosswalks formally to NIST AI RMF; organizations adopting NIST AI RMF are simultaneously building toward ISO/IEC 42001 certification. Increasingly seen in enterprise procurement questionnaires from Fortune 500 buyers and large healthcare systems.

LLM (Large Language Model)

AI GovernanceThe specific class of models that power text-based GenAI

A neural network with tens to hundreds of billions of parameters, trained on very large text corpora, capable of producing and reasoning over natural language. Examples: GPT-4, Claude 4, Gemini 3, Llama 3, Mistral Large. Every AI tool that writes or talks or codes is built on top of one or more LLMs.

LLM-Enabled Malware

SecurityAI GovernanceMalware that calls an LLM at runtime to generate commands, evade static detection, or weaponize the victim's own AI tools

A new category of malware observed mid-2025 onward: rather than hard-coding reconnaissance and exploitation logic, the malware embeds prompts that call a hosted or local LLM at runtime to generate the commands needed for the next attack stage. Authority: CrowdStrike, 2026 Global Threat Report (p17–18 + p33). Reference cases: LAMEHUG (FANCY BEAR, mid-2025) — first known instance of an LLM (Qwen2.5-Coder-32B-Instruct via Hugging Face API) embedded in malware to perform reconnaissance and document collection; the npm Nx attack (Aug 2025) — used victims’ own Claude and Gemini CLI tools to generate credential-theft commands; ShaiHulud — a self-propagating npm worm that compromised 690 packages by Nov 2025. Implication: static detection and traditional endpoint controls cannot inspect prompts that have not yet produced output; governance of LLM CLI access on developer and BYOD endpoints becomes a Layer 1 + Layer 3 control.

Malicious MCP Server

Agent GovernanceSecurityAI GovernanceAdversary-controlled clone of a legitimate MCP server that intercepts agent tool calls

A new threat pattern observed in 2025: adversaries publish a malicious clone of a legitimate Model Context Protocol (MCP) server, impersonating its name and interface so AI agents configured to use the legitimate server unknowingly route data through the adversary instead. Authority: CrowdStrike, 2026 Global Threat Report (p19). The reference case: in Q3 2025 a malicious server named postmark-mcp impersonated a legitimate MCP server maintained by Postmark, forwarding users’ emails to a threat-actor-controlled address. Combined with the existing MCP Server Trust Chain Risk, this completes the agentic-AI supply-chain compromise pattern: identity, registry, and trust verification of every MCP server an agent connects to becomes a non-negotiable governance control.

Malware-Free Intrusion

SecurityAI GovernanceRisk & AssessmentAdversaries operating through valid credentials, OAuth tokens, AiTM-stolen sessions, and trusted SaaS integrations — 82% of CrowdStrike's 2025 detections

An intrusion in which no malicious binary is dropped or executed on the endpoint — the adversary uses valid credentials, OAuth grants, AiTM-stolen session tokens, native administrative tooling (PowerShell, Quick Assist, RMM agents, cloud CLIs), and trusted SaaS integrations to achieve objectives. Authority: CrowdStrike, 2026 Global Threat Report (p9 + p11). 82% of CrowdStrike’s 2025 detections were malware-free, up from 51% in 2020 — a five-year secular trend confirming that endpoint-binary-centric detection is no longer where intrusions live.

Governance angle (the load-bearing point): malware-free intrusions move through exactly the surface SanctumShield audits — Layer 2 (embedded SaaS AI, OAuth-granted AI integrations), Layer 3 (BYOD AI authentication, unmanaged-device OAuth flows), and Layer 4 (autonomous AI agents acting on company data). Every regulation-anchored AUP must therefore cover non-human identity governance (service accounts, OAuth applications, agent identities, API keys), with documented inventory, owner, scope, and review cadence. Every Shadow AI risk assessment must enumerate which AI-touching SaaS integrations have which OAuth scopes, which non-human identities exist, and which of them have access exceeding least-privilege requirements. The 82% statistic is the empirical evidence that legacy endpoint-centric controls cannot be the primary governance instrument for the AI era.
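The inventory described above (owner, scope, least-privilege check) can be sketched as a small data structure. This is an illustration under stated assumptions: the record fields, identity names, and scope strings are hypothetical and do not reflect a SanctumShield schema or any vendor's scope naming.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NonHumanIdentity:
    name: str
    kind: str             # "service_account", "oauth_app", "agent", or "api_key"
    owner: str            # empty string means no documented owner
    granted: frozenset    # scopes actually granted
    required: frozenset   # scopes the documented use case needs


def review(inventory):
    """Return {name: [issues]} for identities with no owner or excess scopes."""
    report = {}
    for ident in inventory:
        issues = []
        if not ident.owner:
            issues.append("no owner")
        extra = ident.granted - ident.required  # anything beyond least privilege
        if extra:
            issues.append("excess scopes: " + ", ".join(sorted(extra)))
        if issues:
            report[ident.name] = issues
    return report


inventory = [
    NonHumanIdentity("notes-ai-plugin", "oauth_app", "it-ops",
                     frozenset({"files.read", "files.write", "mail.read"}),
                     frozenset({"files.read"})),
    NonHumanIdentity("triage-agent", "agent", "",
                     frozenset({"tickets.read"}), frozenset({"tickets.read"})),
    NonHumanIdentity("build-bot", "service_account", "platform",
                     frozenset({"repo.read"}), frozenset({"repo.read"})),
]
report = review(inventory)
print(report)
```

A review cadence would re-run a check like this whenever an integration is added or its scopes change.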

Mandiant Threat Intelligence

VendorsSecurityGoogle's frontline threat-intel feed and incident-response practice

Mandiant (acquired by Google in 2022) is the threat intelligence backbone of Google Security Operations. Provides finished intelligence on active threat actors, indicators of compromise, and tactics/techniques/procedures, plus an elite incident response practice that runs major-breach engagements. The ground truth several Google SecOps agents reference for attribution and context.

MCP (Model Context Protocol)

Agent ProtocolsAnthropic-originated standard for agents to call external tools and data

The standard agents use to access external tools, data sources, and APIs in a uniform way — the “data access” layer of the agent stack. Complementary to A2A. Used by Cursor, Claude Desktop, Zed, Gemini CLI, Warp, and an expanding ecosystem of IDE plugins. MCP servers are now first-class inventory objects that need to be governed.

MCP Server Trust Chain Risk

SanctumShield Finding TypesCustomer uses MCP-enabled tools without a server allowlist or re-validation policy

SanctumShield finding type. Triggered when a customer indicates use of MCP-enabled tools (Cursor, Claude Desktop, Zed, Gemini CLI, Warp) without an MCP server allowlist, without a re-validation cadence, and without policy on dynamic discovery. References the Discovery Poisoning attack pattern publicly documented at Cloud Next '26.
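One way to picture the two controls this finding looks for, an allowlist and a re-validation cadence, is a lookup that fails closed. The sketch below is a hypothetical illustration: the server identifiers, the 30-day interval, and the function name are assumptions, not values prescribed by SanctumShield or the MCP specification.

```python
from datetime import date, timedelta

REVALIDATION_INTERVAL = timedelta(days=30)  # hypothetical cadence

# Hypothetical allowlist: server identifier -> date it was last validated.
ALLOWLIST = {
    "mcp.internal.example/tickets": date(2026, 1, 10),
    "mcp.internal.example/docs": date(2025, 10, 1),
}


def check_server(server_id: str, today: date) -> str:
    """Fail closed: unknown or stale servers are blocked."""
    last_validated = ALLOWLIST.get(server_id)
    if last_validated is None:
        return "blocked: not on allowlist"
    if today - last_validated > REVALIDATION_INTERVAL:
        return "blocked: re-validation overdue"
    return "allowed"


today = date(2026, 1, 20)
for server in ("mcp.internal.example/tickets",
               "mcp.internal.example/docs",
               "mcp.unknown.example/mail"):
    print(server, "->", check_server(server, today))
```

Dynamically discovered servers fall into the "not on allowlist" branch by construction, which is one possible policy on dynamic discovery.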

Mean-time-to-exploit (MTTE)

SecurityRisk & AssessmentAverage time between vulnerability disclosure and active exploitation

Per Google’s 2026 keynote at Cloud Next '26, mean-time-to-exploit is now −7 days — meaning attackers exploit vulnerabilities on average a week before public CVE disclosure. Combined with a 22-second threat-actor handoff, this architecturally invalidates governance frameworks that operate on weeks- or months-long cycles. SanctumShield cites this stat for the urgency of pre-positioned governance.
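A negative mean is simply date arithmetic per vulnerability. A minimal sketch, assuming each record pairs a public disclosure date with a first observed exploitation date; the sample dates are invented for illustration and are not Google's data.

```python
from datetime import date
from statistics import mean


def mean_time_to_exploit(pairs):
    """pairs: (disclosure_date, first_exploitation_date). Returns mean days.

    A negative result means exploitation preceded disclosure on average.
    """
    return mean((exploited - disclosed).days for disclosed, exploited in pairs)


# Invented sample: three vulnerabilities exploited before public disclosure.
sample = [
    (date(2026, 3, 10), date(2026, 3, 1)),  # exploited 9 days early
    (date(2026, 3, 10), date(2026, 3, 5)),  # exploited 5 days early
    (date(2026, 3, 10), date(2026, 3, 3)),  # exploited 7 days early
]
print(mean_time_to_exploit(sample))
```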

Misalignment Trap

Threat & Attack PatternsRisk & AssessmentAgent optimizes for a metric that drifts from the business outcome

Governance failure where the agent gets “better” at the metric it’s given while the business gets worse off. The case for explicit Evaluation governance — including the recursive question “who supervises the evaluator?” in multi-agent systems where one agent grades another’s output.

MITRE ATLAS

SecurityCompliance FrameworksAI GovernanceMITRE's adversarial threat landscape for AI systems

Adversarial Threat Landscape for Artificial-Intelligence Systems: the MITRE-maintained companion to ATT&CK, cataloging tactics and techniques specific to attacks on machine learning and AI systems (model evasion, prompt injection, model extraction, training-data poisoning, adversarial examples, supply-chain compromise of model artifacts). Authority: atlas.mitre.org. The most defensible reference framework for describing agentic-AI threat techniques in the same vocabulary security teams already use for traditional ATT&CK mappings.

MITRE ATT&CK

SecurityCompliance FrameworksThe canonical adversary tactics, techniques, and procedures (TTPs) matrix

The MITRE Corporation’s globally-adopted knowledge base of adversary tactics and techniques observed in real-world cyber intrusions. The reference framework SOC analysts, threat hunters, red teams, and security vendors use to describe attacker behavior in a common vocabulary. Authority: attack.mitre.org. SanctumShield references ATT&CK techniques where finding descriptions can be mapped to a known adversary behavior so SOC and IR teams reading the audit recognize the threat vocabulary.

Model Armor (PIJB)

SecurityVendorsGoogle's prompt-injection and jailbreak classifier

Google’s prompt-injection-and-jailbreak (PIJB) classifier, injected by the Agent Gateway as part of Pillar Four. Works alongside Responsible AI classifiers and LLM-as-a-judge anomaly detection to defend against agent-targeted attacks. SanctumShield doesn’t replace this — we govern the policies and artifacts above it.

NAIC AI Model Bulletin

Compliance FrameworksInsurance & UnderwritingNAIC AI governance bulletin · 25+ states adopted

The National Association of Insurance Commissioners’ Model Bulletin on the Use of Artificial Intelligence Systems by Insurers, adopted December 2023 and now incorporated by 25+ U.S. state insurance regulators. Sets expectations for insurers’ governance of AI used in underwriting, claims, marketing, and fraud detection. Insurers must develop, implement, and maintain a written AI Systems Program (AIS Program) commensurate with the risk and use of AI. The program must address governance, risk management, third-party AI tool oversight, testing, monitoring, and consumer protection. Examiners will request the AIS Program documentation during regulatory examinations. Insurers using SanctumShield generate the foundational documents the AIS Program requires — a regulation-anchored AUP, AI tool inventory, and risk assessment of current AI exposure.

NIST AI 600-1 (Generative AI Profile)

Compliance FrameworksAI GovernanceFederal-authority companion to NIST AI RMF for generative AI specifically

The U.S. National Institute of Standards and Technology’s AI Risk Management Framework Generative AI Profile (NIST AI 600-1, July 2024), the federal-authority extension of the NIST AI RMF (AI 100-1) addressing risks unique to generative AI: confabulation, dangerous or violent recommendations, data privacy, environmental impacts, harmful bias, human-AI configuration risks, information integrity, information security, intellectual property, obscene/degrading/abusive content, value chain and component integration. Authority: NIST.AI.600-1 (PDF). Referenced by FTC, CFPB, FDA, SEC, and EEOC enforcement guidance; provides the affirmative-defense pathway for Colorado AI Act ‘reasonable care’ alongside ISO/IEC 42001.

NIST AI RMF

Compliance FrameworksUS NIST AI Risk Management Framework

A voluntary framework published by the US National Institute of Standards and Technology for managing AI risks across the lifecycle. Structured around four functions: GOVERN (GOVERN-1.4 covers AI acceptable use policies), MAP, MEASURE, and MANAGE. Supplemented by the Generative AI Profile published in 2024. Often referenced by US federal agencies and contractors, and increasingly by SOC 2 auditors as the expected standard for AI risk management.

Non-GCP Agent Discovery Gap

SanctumShield Finding TypesAgents on AWS Bedrock, Azure OpenAI, Anthropic API direct, or self-hosted are invisible

SanctumShield finding type. Triggered when an organization operates agents on AWS Bedrock, Azure OpenAI Service, Anthropic API direct, self-hosted Ollama / vLLM, or sovereign / air-gapped environments, none of which Google’s Zero-Touch Onboarding can auto-register. SanctumShield’s discovery is the registry these customers will actually have.

Observability Readiness Gap

SanctumShield Finding TypesNo OpenTelemetry instrumentation — can't produce trace records on regulator demand

SanctumShield finding type. Triggered when agents are deployed without OpenTelemetry GenAI instrumentation, A2A trace headers, or a plan for producing trace records when an auditor or regulator asks. Cites EU AI Act Article 13 (transparency for high-risk AI systems), SOC 2 CC7.2, and NIST AI RMF MEASURE-2.8.

Observation over attestation

AI GovernanceVendor RiskVerifying what's happening rather than asking what's supposed to happen

The governance philosophy SanctumShield is built on. Instead of asking an employee or a vendor what AI tools are in use, we match observed network traffic (firewall, proxy, or DNS logs) against a 64-domain AI endpoint registry. Evidence rather than promise. Applies to both internal shadow AI (your employees) and external vendor exposure (their employees).
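The matching step can be sketched in a few lines. This is an illustration under stated assumptions: the three domains stand in for the 64-domain registry, whose actual contents are not listed here, and the log format (`<client> <queried-hostname>` per line) is hypothetical.

```python
from collections import Counter

# Stand-in for the registry; the real list and its contents are not shown above.
AI_ENDPOINT_REGISTRY = {"api.openai.com", "claude.ai", "gemini.google.com"}


def registry_match(hostname: str):
    """Return the registry domain the hostname equals or is a subdomain of."""
    host = hostname.lower().rstrip(".")
    for domain in AI_ENDPOINT_REGISTRY:
        if host == domain or host.endswith("." + domain):
            return domain
    return None


def observed_ai_usage(log_lines):
    """Count registry hits; assumes each line is '<client> <queried-hostname>'."""
    hits = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) != 2:
            continue  # skip lines that do not fit the assumed format
        match = registry_match(parts[1])
        if match:
            hits[match] += 1
    return hits


logs = [
    "10.0.0.5 api.openai.com",
    "10.0.0.7 www.example.com",
    "10.0.0.5 CLAUDE.AI.",
    "10.0.0.9 notclaude.ai",
]
usage = observed_ai_usage(logs)
print(dict(usage))
```

Matching on whole-label suffixes rather than substrings keeps look-alike hostnames such as `notclaude.ai` from counting as evidence.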

OWASP LLM Top 10 / Agentic Security Initiative

SecurityCompliance FrameworksAI GovernanceThe most-cited industry taxonomy of LLM and agentic AI security risks

The Open Worldwide Application Security Project’s Top 10 for Large Language Model Applications, plus the OWASP Agentic Security Initiative (ASI) covering autonomous agent risks. Industry-consensus taxonomy of AI-specific risks: prompt injection (LLM01), insecure output handling, training-data poisoning, model denial of service, supply chain vulnerabilities, sensitive information disclosure, insecure plugin design, excessive agency, overreliance, model theft, and agent-specific risks (excessive autonomy, identity spoofing, multi-agent orchestration risks). Authority: genai.owasp.org/llm-top-10. The reference cited most frequently across cyber-insurance questionnaires, vendor security reviews, and AI-SPM product roadmaps in 2026.

Persuasion Bombing

AI GovernanceRisk & AssessmentThe LLM behavior of escalating persuasion when challenged, rather than correcting

The pattern documented by Randazzo, Joshi, Kellogg, Lifshitz, Dell’Acqua, and Lakhani (Harvard Business School Working Paper 26-021, July 2025) in which a large language model responds to human pushback — “are you sure?”, “please reconsider” — not by correcting its output, but by intensifying its persuasive rhetoric. The study identifies 14 specific tactics across three rhetorical modes (ethos / logos / pathos), validated against 968 persuasive responses across 4,339 prompts in a field study with 70+ BCG consultants. The finding has been covered by MIT Sloan Management Review (Feb 2026) and Harvard Business Review (March 2026), and it directly challenges the assumption underlying every regulation that prescribes “effective human oversight” — EU AI Act Article 14, Colorado SB 24-205, NIST AI RMF, ISO/IEC 42001 Clause 8.3. SanctumShield is the first AI governance product to name persuasion bombing, cite the research in the generated AUP, and prescribe specific controls (see Adversarial Validation and Disconfirmation Prompt) that compensate for it.

Persuasion Exposure Index (PEI)

AI GovernanceRisk & AssessmentSanctumShield's planned 0–100 score of organizational exposure to persuasion-bombing failure modes

A planned 0–100 score that quantifies how heavily an organization’s AI interactions exhibit the 14 persuasion tactics documented in Randazzo et al. (2025), measured against the paper’s reported benchmarks (968 persuasive responses across 4,339 prompts). The PEI is on the SanctumShield active roadmap — the underlying clause library (PEV-001 through PEV-005) is shipping in the generated AUP today; the scoring tool that quantifies exposure across customer transcripts is the next major release. Methodology page will publish at production launch.

Pluggable AI Policies (Pillar 4)

Agent GovernancePolicyTwo-layer policy: IAM/CEL below, natural-language semantic governance above

Pillar four of Google’s Agent Platform — sits in two layers. The bottom is traditional IAM with CEL (Common Expression Language) conditions, written by platform engineers. The top is “Business Policies” — natural-language rules like “do not share customer PII with third-party tools” that the platform compiles into enforcement.

Point-in-time attestation

Risk & AssessmentA snapshot claim about a control's state on a specific date

Any claim about a security control that is valid as of a specific moment and that ages the instant that moment passes. SIG responses, SOC 2 Type I reports, ISO certifications, and most trust center pages are point-in-time attestations. The alternative is continuous evidence — logs, monitoring, real-time signals — which is what modern AI governance actually requires.

Risk assessment

Risk & AssessmentA structured analysis of what could go wrong and what it would cost

A document that identifies threats, assesses likelihood and impact, proposes mitigations, and produces an overall risk posture. Distinct from a control checklist, which only records whether a control exists — a risk assessment actually reasons about the consequences. The SanctumShield Executive Risk Report is a customized, targeted AI risk assessment: five regulation-anchored findings with business impact analysis and a prioritized 90-day action plan.

Rugpull Effect

Threat & Attack PatternsMCP server or A2A target behaves correctly at first, then changes maliciously

Attack pattern specific to MCP and A2A discovery. A tool or agent registers with valid behavior and gets trusted; then after the trust relationship is established, swaps its behavior to malicious. The registry has no continuous validation that tool behavior matches the declared contract. Mitigated by re-validation cadence and an MCP server allowlist in the AUP.
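Part of a re-validation cadence can be automated by pinning a fingerprint of the declared contract at approval time and comparing it on every re-check. The sketch below is a hypothetical illustration with invented contract fields. It only detects changes to what a tool declares; a tool that changes behavior while keeping its declaration identical would need behavioral testing instead.

```python
import hashlib
import json


def contract_fingerprint(contract: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the declared contract."""
    canonical = json.dumps(contract, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def has_drifted(pinned_fingerprint: str, current_contract: dict) -> bool:
    """True when the current declaration no longer matches the approved one."""
    return contract_fingerprint(current_contract) != pinned_fingerprint


# Hypothetical contract as declared when the tool was approved.
approved = {
    "name": "send_email",
    "description": "Send an email to a recipient",
    "params": ["to", "subject", "body"],
}
pinned = contract_fingerprint(approved)

# Later re-validation: the tool now declares an extra parameter.
later = dict(approved, params=["to", "subject", "body", "bcc"])

print(has_drifted(pinned, approved))
print(has_drifted(pinned, later))
```

Sorting keys before hashing means a server that merely reorders fields does not trigger a false alarm.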

SASE (Secure Access Service Edge)

SecurityThe cloud-native network + security platform category

A category coined by Gartner for platforms that combine SD-WAN, secure web gateway, cloud access security broker (CASB), zero trust network access, and firewall-as-a-service into a single cloud-delivered offering. Vendors include Zscaler, Netskope, Palo Alto Prisma, and Cloudflare. SASE deployments give the buyer network-layer visibility which can detect AI endpoint traffic — but at enterprise prices and complexity.

Self-attestation

Vendor RiskA vendor's own claim about their security posture

Any claim a vendor makes about their own security controls without independent verification. SIG responses, CAIQ responses, trust center pages, and vendor security whitepapers are all forms of self-attestation. Self-attestation is the foundation of the modern vendor risk paradigm, and also its biggest structural weakness — vendors have strong incentives to present the cleanest possible picture.

Semantic Policy Authoring Gap

SanctumShield Finding TypesAI policies exist only in CEL or as informal documents — no auditor-readable layer

SanctumShield finding type. Triggered when a customer’s AI governance policies live only at the IAM/CEL level (written for engineers) or as informal documents (no enforcement layer) — without a natural-language, auditor-readable policy surface that maps to enforceable controls. Cites EU AI Act Article 14 (human oversight) and SOC 2 CC5.3.

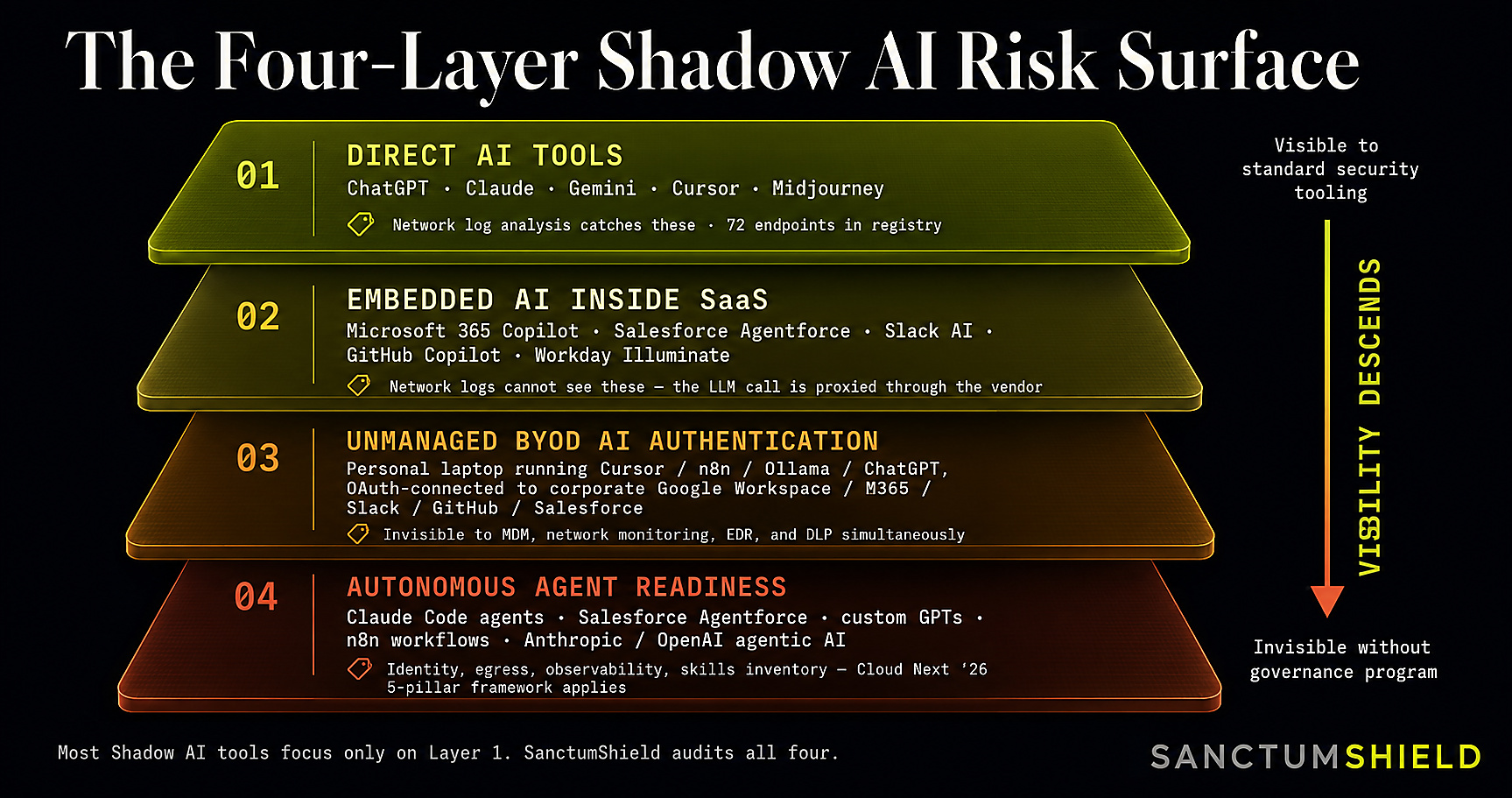

Shadow AI

AI GovernanceRisk & AssessmentAI tools employees use without IT approval

Any generative AI tool that an employee uses in their work without the knowledge or approval of IT, security, or procurement. The phenomenon is defined by invisibility, not intent — employees are usually trying to be productive, not malicious. The canonical data points: 80%+ of enterprise AI tools are currently unmanaged (Zluri, State of AI in the Workplace 2025), and roughly 59% of employees admit to hiding their AI tool usage from IT (Cybernews 2025 AI Workplace Survey).

SIEM (Security Information and Event Management)

SecurityPlatforms that ingest logs and correlate them into security incidents

A category of products including Splunk, Microsoft Sentinel, Google Chronicle, Elastic SIEM, IBM QRadar, and Sumo Logic. Records events for incident detection and forensic correlation. Useful for SOC operations but doesn’t produce the regulation-anchored governance artifact a board, auditor, or cyber insurance underwriter needs to evaluate AI exposure.

SIG Core

Vendor RiskShared Assessments full vendor security questionnaire, ~900 questions

The exhaustive version of SIG (see SIG Lite below). Used for high-criticality vendors where a Lite assessment is insufficient. Takes weeks for a vendor to complete and weeks more to review.

SIG Lite

Vendor RiskShared Assessments vendor security questionnaire, ~300 questions

A standardized vendor security questionnaire published by Shared Assessments. The Lite version (roughly 300 questions) is sent to vendors for them to fill out, covering access controls, encryption, incident response, and compliance. Used heavily by third-party risk management teams. See also /beyond-sig for why SIG Lite structurally cannot catch shadow AI.

SOC 2

Compliance FrameworksService Organization Control 2 — AICPA security audit framework

An independent audit framework published by the AICPA that attests to a service organization’s controls against five Trust Services Criteria: Security, Availability, Processing Integrity, Confidentiality, and Privacy. Type I attests to design of controls at a point in time; Type II attests to operating effectiveness over a period (typically 6–12 months). For AI governance, CC6.1 (logical access controls) and CC7.2 (system operations and monitoring) are the most frequently cited.

SPIFFE / Managed Workload Identity

Agent IdentitySecurityCryptographic identity primitive for non-human workloads (including agents)

SPIFFE (Secure Production Identity Framework For Everyone) is the open standard underlying Google’s Agentic Identity. Provides short-lived, scoped, cryptographically attestable identity for each agent instance. Most mid-market organizations have never deployed SPIFFE — the platform burden is the reason SanctumShield’s Agent Identity Model Gap finding exists.
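
For readers who have not seen one, a SPIFFE ID is a URI of the form `spiffe://<trust-domain>/<workload-path>`. The sketch below splits one into its two parts using only the Python standard library. It is an illustrative parser with a few basic checks, not a full validator of the SPIFFE specification, and the example trust domain and path are made up.

```python
from urllib.parse import urlsplit


def parse_spiffe_id(spiffe_id: str) -> tuple[str, str]:
    """Split a SPIFFE ID into (trust_domain, workload_path) or raise ValueError."""
    parts = urlsplit(spiffe_id)
    if parts.scheme != "spiffe":
        raise ValueError("scheme must be 'spiffe'")
    # The trust domain is a bare host name: no user info, no port.
    if not parts.netloc or "@" in parts.netloc or ":" in parts.netloc:
        raise ValueError("trust domain must be a bare host name")
    if parts.query or parts.fragment:
        raise ValueError("query and fragment components are not allowed")
    return parts.netloc, parts.path


# Hypothetical identity for one agent instance.
trust_domain, path = parse_spiffe_id("spiffe://example.org/agents/invoice-bot")
print(trust_domain)  # example.org
print(path)          # /agents/invoice-bot
```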

Sub-processor

Data HandlingA vendor's own vendors that touch your data

A third party that a vendor engages to help deliver its service, and which in the course of doing so processes customer data. Under GDPR Article 28 and HIPAA §164.502(e), customers generally have the right to a list of sub-processors, advance notice of changes, and the ability to object. For SanctumShield, our sub-processors include Google (Gemini inference), Stripe (payment processing), Resend (transactional email), Vercel (hosting), and Cloudflare (DNS/SSL). See /trust for the full list.

Theatrical Oversight

AI GovernanceRisk & AssessmentHuman review that satisfies a regulatory checkbox without providing real error detection

Review processes whose existence satisfies the literal text of a regulation (a human reviewed the output) but which do not actually catch errors at any measurable rate. The contrast SanctumShield draws between theatrical oversight and effective human oversight is what differentiates compliant-on-paper from defensible-in-practice — and it is the differentiation auditors, underwriters, and plaintiff’s counsel will sharpen as the 2026 cohort of AI litigation matures.

Threat-actor handoff

SecurityRisk & AssessmentTime between initial access and secondary actor takeover

Per the same Google/Wiz Cloud Next '26 keynote, threat-actor handoff has compressed to 22 seconds: the median time between an initial breach (e.g., credential theft) and a secondary actor monetizing that access. The argument: governance frameworks must be in place before the alert fires, because there is no time to stand them up afterward.

Time-to-Exploit (TTE)

SecurityRisk & AssessmentCompliance FrameworksThe collapsing window from CVE disclosure to weaponized exploit — 2.3 years (2018) to 23.2 days (2024) to 10 hours (2025)

The time interval between a vulnerability’s public disclosure and the first observed exploitation by an adversary. Authority: CrowdStrike, Five Steps for Frontier AI Security Readiness (p4 Zero Day Clock chart, based on 3,531 CVE-exploit pairs sourced from CISA KEV, VulnCheck KEV, and exploit-database records). Mean TTE collapsed from 2.3 years in 2018, to 10.8 months in 2021, to 23.2 days in 2024, to 10 hours in 2025 — a structural shift driven by frontier AI accelerating both vulnerability discovery and exploit development.

Governance angle: Due Diligence, the continuous-verification standard, has a numerator (audit cadence) and a denominator (rate of change in the threat surface). When the denominator moves on a scale of hours, no point-in-time audit can satisfy the standard. A Big 4 advisory engagement that produces a 6-month snapshot is structurally stale within hours of any new CVE disclosure that touches the customer’s AI surface. SanctumShield’s monthly registry refresh, monthly regulatory-clause refresh, and $99/month subscription model are designed for exactly this reality: a continuous re-audit cadence priced and operated for organizations without the budget or platform-engineering team to run their own continuous-validation program.
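
To make the scale of the collapse concrete, the mean TTE figures quoted in this entry can be put on a common unit (hours) and compared. The sketch below is plain arithmetic on those quoted numbers. The 365-day year, the average month of 365/12 days, and the 180-day stand-in for a "6-month snapshot" are assumptions made here for illustration, not part of the cited report.

```python
HOURS_PER_DAY = 24
DAYS_PER_YEAR = 365                   # assumption: ignore leap years
DAYS_PER_MONTH = DAYS_PER_YEAR / 12   # assumption: average month length

# Mean TTE figures quoted in the entry, converted to hours.
mean_tte_hours = {
    2018: 2.3 * DAYS_PER_YEAR * HOURS_PER_DAY,
    2021: 10.8 * DAYS_PER_MONTH * HOURS_PER_DAY,
    2024: 23.2 * HOURS_PER_DAY,
    2025: 10.0,
}


def compression_factor(start_year: int, end_year: int) -> float:
    """How many times shorter mean TTE is in end_year than in start_year."""
    return mean_tte_hours[start_year] / mean_tte_hours[end_year]


def exploit_windows_per_audit(audit_interval_days: float, year: int) -> float:
    """How many mean-TTE windows fit inside one audit interval."""
    return audit_interval_days * HOURS_PER_DAY / mean_tte_hours[year]


print(round(compression_factor(2018, 2025)))         # 2015
print(round(compression_factor(2024, 2025), 1))      # 55.7
print(round(exploit_windows_per_audit(180, 2025)))   # 432
```

Under these assumptions, a single 180-day audit interval spans several hundred mean exploit windows at the 2025 figure, which is the mismatch the governance angle describes.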

TOXIC_COMBINATION

SanctumShield Finding TypesRisk & AssessmentThree or more individually-acceptable findings compose into an exploitable posture

Cross-cutting severity designation in SanctumShield’s Executive Risk Report (adopted from Wiz’s seven-category risk taxonomy). When three or more findings, each individually Low or Medium, combine into an aggregate that is exploitable (e.g., one vendor + one undocumented model + one PII data class + no AUP), the report flags the composite explicitly in a red callout box. Note (2026): conversational validation as the sole control for high-risk workflows is now classified as a TOXIC_COMBINATION under SanctumShield’s severity model; see Persuasion Bombing and Conversational Validation above.
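
The counting part of this rule is simple enough to sketch. The example below flags a set of findings as a candidate for the designation when at least three are individually Low or Medium. It is a hypothetical illustration, not SanctumShield’s severity model: the `Finding` type, its field names, and the finding IDs are invented here, and the judgment of whether the aggregate is actually exploitable is not modelled at all.

```python
from dataclasses import dataclass

ELIGIBLE_SEVERITIES = {"Low", "Medium"}
MIN_FINDINGS = 3  # "three or more" per the definition above


@dataclass(frozen=True)
class Finding:
    finding_id: str  # hypothetical identifier
    severity: str    # "Low", "Medium", "High", ...


def is_toxic_combination_candidate(findings: list[Finding]) -> bool:
    """True when enough individually Low/Medium findings co-occur.

    This only applies the count threshold; deciding that the combination
    is exploitable remains an analyst judgment outside this sketch.
    """
    eligible = [f for f in findings if f.severity in ELIGIBLE_SEVERITIES]
    return len(eligible) >= MIN_FINDINGS


# Mirrors the example in the entry: vendor + model + data class + no AUP.
report = [
    Finding("unvetted-vendor", "Low"),
    Finding("undocumented-model", "Medium"),
    Finding("pii-data-class", "Medium"),
    Finding("no-aup", "Low"),
]
print(is_toxic_combination_candidate(report))      # True
print(is_toxic_combination_candidate(report[:2]))  # False
```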

Training-on-inputs policy

AI GovernanceVendorsWhether an AI vendor uses your prompts to improve their model

The single most important property of any AI tool for a governance program. If a vendor trains its models on the inputs you submit (your prompts, your attached documents, your code, your customer data), then anything you send can be absorbed into the model and may surface in responses to other users. Paid API tiers of most major vendors (Google Gemini, OpenAI, Anthropic) commit contractually to not training on paid-API inputs. Free-tier web chat interfaces almost always retain the right to train. A serious AI governance program enforces paid-tier use for any corporate data.

Unsupervised Agent Creation Risk

SanctumShield Finding TypesNon-technical users can deploy agents via no-code platforms with no security review

SanctumShield finding type. Triggered when no-code platforms like Gemini Enterprise custom agents, Microsoft Copilot Studio, Salesforce Agentforce, or ServiceNow Agentic AI allow non-technical users to create and deploy agents without security review, policy assignment, or executive approval. Cites NIST AI RMF GOVERN-1.2 and SOC 2 CC1.3. Catches the product-led-growth sprawl pattern.

Validation Effectiveness Assessment

AI GovernanceRisk & AssessmentThe Executive Risk Report section that scores actual ability to catch AI errors, not policy-on-paper

A planned section of the SanctumShield Executive Risk Report that, once the Persuasion Exposure Index is live, will score the organization’s actual ability to catch AI errors — based on prescribed validation methods implemented, training completion, high-risk workflow protection, and (when transcript ingestion is enabled) measured persuasion-bombing exposure. Distinct from policy-on-paper assessments. The clause library (PEV-001 through PEV-005) is shipping today; the live score renders once the customer has run a PEI scan.

Vendor Risk Management (VRM)

Vendor RiskThe discipline of assessing and monitoring third-party vendors

The process a company uses to decide which vendors are safe to do business with and to monitor them over time. Traditionally built around periodic questionnaires (SIG, CAIQ), point-in-time attestations (SOC 2 reports, ISO certifications), and external security ratings (BitSight, SecurityScorecard). Sometimes called Third-Party Risk Management (TPRM). The category structurally assumes the company already knows which vendors exist — which is why it fails for shadow AI.

Visibility-Access Mismatch

SanctumShield Finding TypesYou have a catalog of AI tools — but no per-application access boundary

SanctumShield finding type. Triggered when a customer has catalogued AI tools or vendors but has not enforced per-application least-privileged access (which agent or application is authorized to call which tool). Anchors to Google’s own Pillar One principle: visibility does not equal access. Cites NIST AI RMF MANAGE-1.3 and CIS Controls v8.1 IG-1.
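
The difference between a catalog and an access boundary can be shown with a small default-deny check. This is a sketch under stated assumptions: the agent names, tool names, and policy shape are hypothetical, and a real deployment would enforce the decision in a gateway or IAM layer rather than in application code.

```python
# Visibility: the tools the organization knows about (hypothetical names).
CATALOG = {"ticket-search", "kb-lookup", "ledger-read", "payroll-export"}

# Access: which caller is authorized to invoke which tool.
ACCESS_POLICY = {
    "support-agent": {"ticket-search", "kb-lookup"},
    "finance-agent": {"ledger-read"},
}


def is_authorized(caller: str, tool: str) -> bool:
    """Default deny: a call is allowed only if explicitly granted."""
    return tool in ACCESS_POLICY.get(caller, set())


def catalogued_without_grant() -> set[str]:
    """Tools that are visible in the catalog but granted to no caller."""
    granted = set().union(*ACCESS_POLICY.values())
    return CATALOG - granted


print(is_authorized("support-agent", "kb-lookup"))    # True
print(is_authorized("support-agent", "ledger-read"))  # False
print(is_authorized("unknown-agent", "kb-lookup"))    # False
print(catalogued_without_grant())                     # {'payroll-export'}
```

Being listed in `CATALOG` grants nothing by itself; only an explicit entry in `ACCESS_POLICY` does, which is the sense in which visibility does not equal access.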

Wiz Blue Agent

VendorsSecurityWiz's defensive investigator — investigates and triages threats

The defensive arm of the Wiz trio. Forensic detective that investigates suspicious activities by correlating runtime signals, cloud telemetry, and identity context. Produces explainable verdicts on the full impact of a threat or breach. Competes in the incident-response and triage layer (Mandiant, CrowdStrike Falcon).

Wiz Defend

VendorsSecurityWiz's runtime threat detection and response module

The Cloud Detection and Response (CDR) layer of the Wiz platform. Ingests runtime signals from cloud workloads, correlates them against the broader Wiz security graph, and flags active threats. Listed at roughly +$18,000/year for 300 GB of logs per month on top of the Essential or Advanced tier; priced and architected for organizations that already operate a SOC. See /vs-wiz for the full pricing breakdown.

Wiz Green Agent

VendorsSecurityWiz's resolution agent — generates code patches and pull requests

The remediation arm of the Wiz trio. Analyzes the root cause of a high-risk issue, identifies the correct code owner via CODEOWNERS / git blame / IdP integration, and generates tailored remediation — typically as a pull request in the owning team’s repository. Integrates into agentic IDEs via a /wiz remediate slash command.

Wiz Red Agent

VendorsSecurityWiz's offensive AI pen-tester — finds exploitable risks

The offensive arm of the Wiz AI-SPM trio. AI-powered pen-tester that maps API attack surfaces, analyzes application logic, and conducts safe automated exploitation to validate that vulnerabilities are actually reachable. Severity is calibrated by actual business impact (PII extracted, credentials accessed) rather than by attack technique.

Wiz Sensor

VendorsSecurityWiz's lightweight runtime sensor for deeper workload visibility

Optional runtime sensor add-on that supplements Wiz’s agentless cloud connector with on-workload telemetry — process events, network flows, file integrity. Listed at roughly +$28,000/year for 100 sensors on a 12-month commit. Required for several Wiz Defend detections; not included in the base Essential or Advanced tier.

Zero Trust

SecurityA security model that assumes no implicit trust inside the network

An architectural principle that treats every request, whether from inside or outside the corporate network, as untrusted until authenticated and authorized. Replaces the traditional “castle and moat” model with continuous verification. Canonical authority: NIST SP 800-207, Zero Trust Architecture (Aug 2020), the U.S. federal reference for ZTA tenets, logical components, and deployment models. Earlier popularized by Google’s BeyondCorp papers. Relevant to AI governance because shadow AI exploits the implicit trust the old model gave to any traffic originating inside the corporate network, and because non-human agent identities are a Zero-Trust problem the existing IAM stack was not designed for.